Ethical AI Auditing 2026: A 6-Month Strategy for U.S. Enterprises

The rapid advancement and integration of Artificial Intelligence (AI) into core business operations present unprecedented opportunities for innovation, efficiency, and growth. However, this technological revolution also raises complex ethical considerations and potential risks. As U.S. enterprises increasingly leverage AI, robust Ethical AI Auditing becomes not just a moral obligation but a strategic necessity. With 2026 on the horizon, the regulatory landscape is evolving. It demands proactive measures to ensure that AI systems are fair, transparent, and accountable, and that harmful bias is identified and minimized.

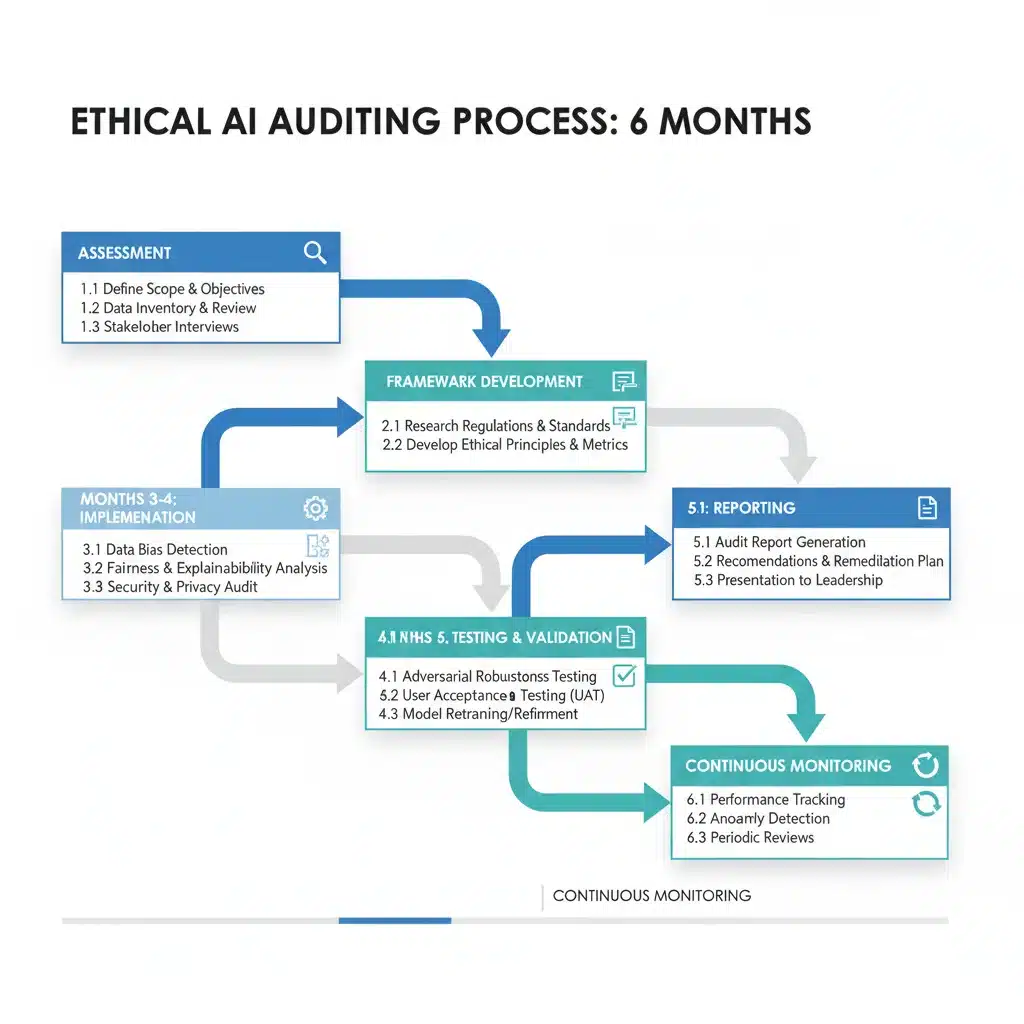

This comprehensive guide outlines a 6-month strategy for U.S. enterprises to establish and implement an effective Ethical AI Auditing framework. Our goal is to equip organizations with the knowledge and actionable steps required to navigate the complexities of AI ethics, mitigate risks, build public trust, and comply with emerging regulations. By embracing a structured auditing approach, businesses can unlock the full potential of AI responsibly.

The concept of Ethical AI Auditing extends beyond mere technical validation. It encompasses a holistic evaluation of AI systems throughout their lifecycle, from design and development to deployment and ongoing maintenance. This includes assessing data provenance, algorithm fairness, interpretability, data privacy, security, and societal impact. For U.S. enterprises, the urgency is amplified by potential federal and state-level regulations, increasing consumer awareness, and the reputational risks associated with AI failures.

The Evolving Landscape of Ethical AI and Regulation

Before diving into the 6-month strategy, it's crucial to understand the context. The year 2026 is likely to be a pivotal one for AI regulation in the U.S. The United States has no comprehensive federal AI law comparable to the EU AI Act, but several initiatives are already shaping the environment:

- NIST AI Risk Management Framework (AI RMF): Published in January 2023, the NIST AI RMF provides voluntary guidance for managing risks associated with AI. It is organized around four core functions (Govern, Map, Measure, and Manage), offering a foundational structure for ethical considerations.

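- Executive Orders and White House Directives: Federal executive policy on AI has shifted between administrations. The 2023 executive order on safe, secure, and trustworthy AI, which directed federal agencies to develop standards and guidelines, was rescinded in January 2025, and subsequent orders have emphasized removing barriers to AI development. Enterprises should track current directives and agency guidance rather than rely on any single order.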

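- State-Level Initiatives: States such as California, Colorado, and New York are exploring or enacting their own AI-related legislation, particularly concerning data privacy, algorithmic bias in employment, and consumer protection. New York City's Local Law 144, for example, already requires independent bias audits of automated employment decision tools.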

- Industry Standards and Best Practices: Beyond government, various industry bodies and consortia are developing sector-specific ethical AI guidelines, reflecting a growing consensus on responsible AI deployment.

These developments underscore that waiting for definitive legislation is no longer a viable strategy. Proactive engagement with Ethical AI Auditing not only prepares organizations for compliance but also positions them as leaders in responsible innovation.

Months 1-2: Foundation & Assessment – Laying the Groundwork for Ethical AI Auditing

The initial two months are critical for establishing a solid foundation. This phase focuses on understanding the current AI landscape within the enterprise, identifying key stakeholders, and defining the scope of the Ethical AI Auditing initiative.

Defining Scope and Objectives

Not all AI systems carry the same ethical risks. It’s essential to prioritize. Begin by:

- Inventorying AI Systems: Create a comprehensive list of all AI systems currently in use or under development across the organization. This should include machine learning models, robotic process automation (RPA) tools, natural language processing (NLP) applications, and more.

- Risk Categorization: Classify each AI system based on its potential ethical impact. High-risk systems might include those involved in critical decision-making (e.g., hiring, lending, healthcare), public safety, or systems handling sensitive personal data. Low-risk systems might be internal tools with minimal external impact.

- Stakeholder Identification: Identify all relevant internal and external stakeholders. Internally, this includes legal, compliance, IT, data science, product development, HR, and executive leadership. Externally, consider customers, regulatory bodies, and advocacy groups.

- Establishing Clear Objectives: What does successful Ethical AI Auditing look like for your organization? Objectives might include ensuring regulatory compliance, mitigating bias, enhancing transparency, building customer trust, or improving system explainability.
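
The inventory and risk-categorization steps above can be captured in a simple structured record. The Python sketch below is illustrative only. The `AISystem` fields, the `HIGH_RISK_DOMAINS` set, and the three-tier scheme are hypothetical assumptions, not a standard taxonomy. Real tiering criteria should come from the governance committee and applicable regulations.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


# Hypothetical set of decision domains treated as high-risk (cf. hiring, lending, healthcare).
HIGH_RISK_DOMAINS = {"hiring", "lending", "healthcare", "public_safety"}


@dataclass
class AISystem:
    name: str
    system_type: str              # e.g. "ml_model", "rpa", "nlp"
    domain: str                   # business decision the system supports
    handles_sensitive_data: bool
    external_impact: bool         # does it affect customers or the public?
    owner: str = "unassigned"


def classify_risk(system: AISystem) -> RiskTier:
    """Assign a coarse risk tier from the illustrative criteria above."""
    if system.domain in HIGH_RISK_DOMAINS or system.handles_sensitive_data:
        return RiskTier.HIGH
    if system.external_impact:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def prioritize(inventory):
    """Return (system, tier) pairs ordered highest-risk first."""
    order = {RiskTier.HIGH: 0, RiskTier.MEDIUM: 1, RiskTier.LOW: 2}
    tiered = [(system, classify_risk(system)) for system in inventory]
    return sorted(tiered, key=lambda pair: order[pair[1]])


# Hypothetical inventory entries.
inventory = [
    AISystem("ticket-router", "nlp", "it_support", False, False),
    AISystem("resume-screener", "ml_model", "hiring", True, True),
    AISystem("chat-assistant", "nlp", "customer_service", False, True),
]
for system, tier in prioritize(inventory):
    print(f"{system.name}: {tier.value}")
# resume-screener: high
# chat-assistant: medium
# ticket-router: low
```

Keeping the rules in one small function makes the tiering criteria easy for legal and compliance reviewers to read and amend.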

Forming an Ethical AI Governance Committee

A dedicated committee is essential for oversight and strategic direction. This committee should be cross-functional, including representatives from legal, compliance, data science, engineering, and business units. Its responsibilities include:

- Defining ethical AI principles aligned with organizational values and emerging regulations.

- Approving the Ethical AI Auditing framework and methodology.

- Overseeing risk assessments and mitigation strategies.

- Ensuring accountability for ethical AI practices.

- Communicating progress and challenges to executive leadership.

Initial Risk Assessment and Gap Analysis

Conduct a preliminary assessment of existing AI systems against established ethical principles and emerging regulatory guidelines. This includes:

- Bias Detection: Identify potential sources of bias in training data, model design, and deployment. This could involve reviewing data collection methods, demographic representation, and historical data patterns.

- Transparency and Explainability: Evaluate the interpretability of AI models. Can decisions made by the AI be understood and explained to stakeholders? Are there mechanisms for auditing model logic?

- Data Privacy and Security: Assess how AI systems handle personal and sensitive data. Check compliance with applicable laws, such as the CCPA (as amended by the CPRA) and other state privacy statutes, the GDPR where EU residents' data is processed, and any future federal privacy legislation.

- Accountability Frameworks: Determine whether clear lines of responsibility are established for AI system development, deployment, and remediation of ethical issues.

- Impact Assessment: Begin to assess the broader societal impact of your AI systems, such as who could be harmed by errors or misuse.

The gap analysis will highlight areas where current practices fall short and where the Ethical AI Auditing strategy needs to focus its efforts.
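
A gap analysis can be as simple as comparing the controls each system has in place against the controls required for its risk tier. In this minimal Python sketch, the control names in `REQUIRED_CONTROLS` loosely mirror the assessment areas listed above, with `named_owner` standing in for accountability. The tier requirements and sample systems are hypothetical.

```python
# Hypothetical controls required per risk tier, loosely mirroring the assessment areas above.
REQUIRED_CONTROLS = {
    "high": {"bias_testing", "explainability_review", "privacy_assessment",
             "named_owner", "impact_assessment"},
    "medium": {"bias_testing", "privacy_assessment", "named_owner"},
    "low": {"named_owner"},
}


def gap_analysis(systems):
    """Map each system name to the sorted list of required controls it lacks.

    `systems` is an iterable of (name, tier, implemented_controls) tuples.
    Systems with no gaps are omitted from the result.
    """
    gaps = {}
    for name, tier, implemented in systems:
        missing = REQUIRED_CONTROLS[tier] - set(implemented)
        if missing:
            gaps[name] = sorted(missing)
    return gaps


# Hypothetical results of the preliminary assessment.
assessed = [
    ("resume-screener", "high", ["bias_testing", "named_owner"]),
    ("chat-assistant", "medium", ["bias_testing", "privacy_assessment", "named_owner"]),
    ("ticket-router", "low", []),
]
for name, missing in gap_analysis(assessed).items():
    print(name, "->", ", ".join(missing))
# resume-screener -> explainability_review, impact_assessment, privacy_assessment
# ticket-router -> named_owner
```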

Months 3-4: Framework Development & Tooling – Building the Ethical AI Auditing Mechanism

With the foundation laid, months three and four are dedicated to developing the specific framework, policies, and tools necessary for conducting robust Ethical AI Auditing.

Developing an Ethical AI Auditing Framework

Based on the initial assessment, design a tailored auditing framework. This framework should be comprehensive and adaptable, incorporating:

- Ethical Principles: Formalize the organization’s ethical AI principles (e.g., fairness, transparency, accountability, privacy, robustness, human oversight).

- Methodology: Define the specific steps and procedures for conducting audits, including data collection, analysis techniques, and reporting formats.

- Metrics and KPIs: Establish quantifiable metrics and Key Performance Indicators (KPIs) to measure ethical performance. Examples include fairness metrics (such as disparate impact and equal opportunity), explainability scores, and data leakage rates.

- Roles and Responsibilities: Clearly define who is responsible for what in the auditing process, from data scientists to legal counsel.

- Documentation Standards: Mandate clear and consistent documentation for all AI models, including data sources, model architecture, training parameters, and ethical considerations.
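
Two of the fairness metrics named above can be computed directly from model predictions. The sketch below makes three assumptions: predictions are binary (1 = favorable outcome), there is a single protected attribute, and the group labels `A` and `B` are hypothetical. It computes the disparate impact ratio, which is the favorable-outcome rate of the unprivileged group divided by that of the privileged group. It also computes the equal opportunity difference, which is the gap in true-positive rates. The 0.8 threshold reflects the "four-fifths rule" from the U.S. EEOC Uniform Guidelines on Employee Selection Procedures. Treat it as a screening heuristic, not a legal determination.

```python
import numpy as np


def disparate_impact(y_pred, group, unprivileged, privileged):
    """Favorable-outcome rate of the unprivileged group / that of the privileged group."""
    y_pred, group = np.asarray(y_pred), np.asarray(group)
    return y_pred[group == unprivileged].mean() / y_pred[group == privileged].mean()


def equal_opportunity_difference(y_true, y_pred, group, unprivileged, privileged):
    """True-positive rate of the unprivileged group minus that of the privileged group."""
    y_true, y_pred, group = np.asarray(y_true), np.asarray(y_pred), np.asarray(group)

    def tpr(g):
        # Among actual positives in group g, the share the model predicted positive.
        actual_positives = (group == g) & (y_true == 1)
        return y_pred[actual_positives].mean()

    return tpr(unprivileged) - tpr(privileged)


# Hypothetical audit sample: true outcomes, model decisions, and group labels.
y_true = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 1, 1, 0]
group = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]

di = disparate_impact(y_pred, group, unprivileged="A", privileged="B")
eod = equal_opportunity_difference(y_true, y_pred, group, unprivileged="A", privileged="B")
print(f"Disparate impact ratio: {di:.2f}")          # 0.50
print(f"Equal opportunity difference: {eod:.2f}")   # -0.33
print("Below four-fifths threshold:", di < 0.8)     # True
```

The two metrics can disagree, and no single metric captures every notion of fairness. Which ones to track is a policy decision for the governance committee.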

Selecting and Implementing Ethical AI Tools

Leverage technology to support your Ethical AI Auditing efforts. This might involve:

- Bias Detection Tools: Software that can analyze datasets and model outputs for demographic or other forms of bias.

- Explainable AI (XAI) Tools: Techniques and libraries that help interpret complex AI models by providing insight into their decision-making processes (e.g., LIME, SHAP).

- Data Governance Platforms: Solutions for managing data lineage, access controls, and privacy compliance.

- Model Monitoring Tools: Platforms that continuously monitor AI model performance, drift, and potential ethical violations in real-time.

- GRC (Governance, Risk, and Compliance) Software: Integrate ethical AI considerations into existing GRC platforms to streamline compliance reporting and risk management.
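
The Python sketch below gives a dependency-free illustration of the kind of insight XAI tools provide. It implements permutation importance, a model-agnostic technique that measures how much accuracy drops when one feature's values are shuffled. This is a swapped-in stand-in, not LIME or SHAP. It yields global feature importance rather than per-decision explanations. The `toy_model` and its two features are hypothetical.

```python
import numpy as np


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean drop in accuracy when each feature column is randomly shuffled."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    baseline = np.mean(predict(X) == y)
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        drops = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            # Break the link between feature j and the outcome; other columns stay intact.
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            drops.append(baseline - np.mean(predict(X_perm) == y))
        importances[j] = np.mean(drops)
    return importances


def toy_model(X):
    # Hypothetical model: approves (1) when feature 0 exceeds 0.5 and ignores feature 1.
    return (X[:, 0] > 0.5).astype(int)


rng = np.random.default_rng(42)
X = rng.random((200, 2))
y = toy_model(X)  # labels the toy model reproduces exactly

scores = permutation_importance(toy_model, X, y)
for name, score in zip(["feature_0", "feature_1"], scores):
    print(f"{name}: {score:.2f}")
```

A feature with near-zero importance is one the model effectively ignores. In an audit, high importance for a likely proxy of a protected attribute (such as ZIP code) would warrant closer review.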

Investing in the right tools can significantly enhance the efficiency and effectiveness of your Ethical AI Auditing program.

Policy and Procedure Development

Translate the framework into actionable policies and procedures. This includes:

- Ethical AI Policy: A high-level document outlining the organization’s commitment to ethical AI and its core principles.

- AI Development Guidelines: Integrate ethical considerations into every stage of the AI development lifecycle (e.g., ethics-by-design principles).

- Data Governance Policies: Specific policies for data collection, storage, usage, and deletion, with a focus on ethical implications.

- Incident Response Plan: A clear plan for addressing and remediating ethical AI failures or breaches.

- Transparency and Disclosure Guidelines: Policies for communicating AI system capabilities, limitations, and ethical considerations to users and the public.

Months 5-6: Implementation & Continuous Improvement – Operationalizing Ethical AI Auditing

The final two months are dedicated to putting the framework into practice, conducting initial audits, and establishing a continuous improvement loop for your Ethical AI Auditing program.

Conducting Pilot Audits

Begin with pilot audits on a select number of high-risk AI systems identified in Months 1-2. This allows you to test the framework and surface practical challenges. Each pilot audit should involve:

- Data Review: Scrutinizing training data, validation data, and production data for biases, quality issues, and privacy compliance.

- Algorithm Review: Analyzing the AI model’s architecture, training methodology, and performance metrics for fairness, robustness, and transparency.

- Output Analysis: Evaluating the AI system’s decisions and recommendations for ethical implications, potential discrimination, and unintended consequences.

- Stakeholder Interviews: Gathering feedback from developers, users, and affected parties.

- Documentation Review: Ensuring all aspects of the AI system are adequately documented according to established standards.
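
For the data review step, one basic check is whether each demographic group's share of the training data is close to its share in a reference population. The minimal Python sketch below assumes records are dictionaries. It uses a hypothetical `age_band` attribute and hypothetical reference shares, and it flags groups whose observed share deviates by more than a chosen tolerance. Representation alone does not establish fairness, but large gaps are a common source of downstream bias.

```python
from collections import Counter


def representation_gaps(records, attribute, reference, tolerance=0.05):
    """Return {group: (observed_share, expected_share)} for groups whose share of
    the dataset differs from the reference share by more than `tolerance`.

    Only groups listed in `reference` are checked; groups absent from the data
    count as a 0 share.
    """
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    flagged = {}
    for grp, expected in reference.items():
        observed = counts.get(grp, 0) / total
        if abs(observed - expected) > tolerance:
            flagged[grp] = (round(observed, 3), expected)
    return flagged


# Hypothetical training records and reference population shares.
records = ([{"age_band": "18-39"}] * 60
           + [{"age_band": "40-64"}] * 38
           + [{"age_band": "65+"}] * 2)
reference = {"18-39": 0.40, "40-64": 0.40, "65+": 0.20}

print(representation_gaps(records, "age_band", reference))
# {'18-39': (0.6, 0.4), '65+': (0.02, 0.2)}
```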

Reporting and Remediation

Based on the pilot audit findings, generate detailed reports. These reports should:

- Summarize ethical risks identified.

- Quantify the impact of these risks where possible.

- Propose concrete remediation strategies and timelines.

- Assign responsibility for implementing remediation actions.

The Ethical AI Governance Committee should review these reports, approve remediation plans, and track their implementation. This phase is crucial for demonstrating the value and effectiveness of your Ethical AI Auditing program.
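
Tracking findings in a structured form helps the committee see what is open, how severe it is, and whether remediation is on schedule. The sketch below is a hypothetical Python data model. Its fields mirror the report contents listed above: risk, quantified impact, remediation, owner, and timeline. The severity scale and sample findings are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical severity scale; lower number = more urgent.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    system: str
    risk: str          # summary of the ethical risk identified
    severity: str      # one of SEVERITY_ORDER's keys
    impact: str        # quantified impact where possible
    remediation: str   # proposed remediation action
    owner: str         # person accountable for remediation
    due: date
    resolved: bool = False


def committee_summary(findings, today):
    """Open findings sorted by severity, then due date, with an overdue flag."""
    open_items = [f for f in findings if not f.resolved]
    open_items.sort(key=lambda f: (SEVERITY_ORDER[f.severity], f.due))
    return [(f.system, f.severity, f.owner, f.due < today) for f in open_items]


# Hypothetical findings from pilot audits.
findings = [
    Finding("chat-assistant", "Unclear AI disclosure to users", "medium",
            "All customer chats", "Add disclosure banner", "product lead",
            date(2026, 5, 1)),
    Finding("resume-screener", "Selection-rate ratio below 0.8 for one age band",
            "high", "Affects all screened applicants", "Re-weight training data and retest",
            "ML lead", date(2026, 3, 31)),
    Finding("ticket-router", "No named owner", "low", "Internal only",
            "Assign owner", "IT manager", date(2026, 2, 15), resolved=True),
]
for row in committee_summary(findings, today=date(2026, 4, 15)):
    print(row)
# ('resume-screener', 'high', 'ML lead', True)
# ('chat-assistant', 'medium', 'product lead', False)
```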

Training and Awareness Programs

A critical component of operationalizing ethical AI is ensuring that all relevant personnel are educated and aware. Develop and roll out comprehensive training programs for:

- AI Developers and Data Scientists: Training on ethical AI principles, bias mitigation techniques, explainable AI practices, and secure coding for AI.

- Legal and Compliance Teams: Education on emerging AI regulations, ethical risk assessment, and incident response.

- Management and Executives: Awareness of the strategic importance of ethical AI, reputational risks, and the overall governance framework.

- Business Units: Understanding the ethical implications of AI systems they use or propose.

These programs foster a culture of ethical AI throughout the organization, making Ethical AI Auditing a shared responsibility.

Establishing a Continuous Monitoring and Improvement Loop

Ethical AI Auditing is not a one-time event but an ongoing process. Establish mechanisms for continuous monitoring and improvement:

- Regular Audit Cycles: Schedule periodic audits for all AI systems, with frequency determined by risk level.

- Real-time Monitoring: Implement tools for continuous monitoring of AI system performance, fairness metrics, and data drift in production environments.

- Feedback Mechanisms: Create channels for users and stakeholders to report ethical concerns or potential biases in AI systems.

- Policy Review: Regularly review and update ethical AI policies and procedures to adapt to new technologies, regulatory changes, and lessons learned from audits.

- Research and Development: Stay abreast of the latest research in AI ethics, fairness, interpretability, and privacy-preserving AI.

This iterative approach ensures that your organization remains agile and responsive to the dynamic nature of AI ethics.
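
The real-time monitoring mechanism described above needs a drift measure. A widely used one is the Population Stability Index (PSI), which compares the distribution of a feature or model score in production against a baseline such as the training data. It is defined as PSI = sum over bins of (actual_i - expected_i) * ln(actual_i / expected_i). The Python sketch below assumes a single numeric feature, quantile bins taken from the baseline, and simulated data. A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds should be calibrated per system.

```python
import numpy as np


def population_stability_index(baseline, current, n_bins=10, eps=1e-6):
    """PSI between a baseline sample (e.g. training data) and a production sample."""
    baseline = np.asarray(baseline, dtype=float)
    current = np.asarray(current, dtype=float)
    # Quantile edges give each bin roughly equal baseline mass.
    edges = np.quantile(baseline, np.linspace(0.0, 1.0, n_bins + 1))
    # Clip production values into the baseline range so outliers land in the end bins.
    current = np.clip(current, edges[0], edges[-1])
    expected = np.histogram(baseline, bins=edges)[0] / len(baseline)
    actual = np.histogram(current, bins=edges)[0] / len(current)
    # Floor empty bins at eps to avoid log(0) and division by zero.
    expected = np.clip(expected, eps, None)
    actual = np.clip(actual, eps, None)
    return float(np.sum((actual - expected) * np.log(actual / expected)))


rng = np.random.default_rng(0)
training = rng.normal(0.0, 1.0, 5000)
production_stable = rng.normal(0.0, 1.0, 5000)
production_shifted = rng.normal(1.0, 1.0, 5000)  # simulated drift

psi_stable = population_stability_index(training, production_stable)
psi_shifted = population_stability_index(training, production_shifted)
print(f"stable: {psi_stable:.3f}, shifted: {psi_shifted:.3f}")
for name, psi in [("stable", psi_stable), ("shifted", psi_shifted)]:
    status = "ALERT" if psi > 0.25 else "watch" if psi > 0.1 else "ok"
    print(name, status)
```

The same windowed comparison can be run on model scores or on fairness metrics recomputed over recent production batches.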

Key Challenges and Mitigation Strategies in Ethical AI Auditing

Implementing a robust Ethical AI Auditing strategy is not without its challenges. U.S. enterprises may encounter:

- Data Availability and Quality: A lack of diverse, representative, or high-quality data can hinder bias detection and fairness assessments.

  - Mitigation: Invest in data governance, use synthetic data generation techniques where appropriate, and implement rigorous data validation processes.

- Model Complexity (Black Box Problem): Deep learning models can be difficult to interpret, making explainability a significant hurdle.

  - Mitigation: Use Explainable AI (XAI) techniques, favor simpler, inherently interpretable models where appropriate, and invest in interpretability research.

- Lack of Standardized Metrics: Fairness, transparency, and accountability are difficult to measure objectively, and there are no universally accepted metrics for them.

  - Mitigation: Adopt industry best practices, participate in standardization efforts, and build internal consensus on relevant metrics.

- Resource Constraints: Expertise in AI ethics and auditing can be scarce and expensive.

  - Mitigation: Upskill existing staff, engage external consultants for specialized expertise, and automate auditing processes where possible.

- Organizational Resistance: Development teams or business units may resist because of perceived slowdowns or added complexity.

  - Mitigation: Foster a culture of ethical AI from the top down, demonstrate the business value of responsible AI, and involve stakeholders early in the process.

The Business Imperative for Ethical AI Auditing

Beyond compliance and risk mitigation, a strong commitment to Ethical AI Auditing offers significant business advantages for U.S. enterprises:

- Enhanced Trust and Reputation: Organizations known for their ethical AI practices build stronger trust with customers, partners, and the public, leading to increased brand loyalty and positive public perception.

- Competitive Advantage: Companies that proactively address AI ethics can differentiate themselves in the market, attracting ethically conscious consumers and talent.

- Innovation and Growth: By establishing clear ethical boundaries, organizations can innovate more responsibly, avoiding costly missteps and fostering sustainable growth.

- Improved Decision-Making: AI systems that are audited for fairness, robustness, and transparency are more likely to produce reliable outputs, which supports better business decisions.

- Talent Attraction and Retention: A commitment to ethical AI can attract top talent who are increasingly looking to work for socially responsible organizations.

- Reduced Legal and Financial Risks: Proactive auditing can reduce the likelihood of costly lawsuits, regulatory fines, and reputational damage stemming from AI failures.

Conclusion: Charting a Responsible Future with Ethical AI Auditing

The journey to fully integrate Ethical AI Auditing into an enterprise’s operational fabric is complex but undeniably rewarding. By following this 6-month strategic roadmap, U.S. enterprises can systematically build a robust framework that not only addresses current and future regulatory demands but also embeds a culture of responsible AI innovation.

As we approach 2026, the organizations that prioritize ethical considerations in their AI development and deployment will be the ones best positioned for long-term success, resilience, and leadership in the AI-driven economy. Embracing Ethical AI Auditing is not merely about avoiding pitfalls; it is about actively shaping a future where AI serves humanity with fairness, transparency, and accountability. Start your 6-month journey today to ensure your enterprise is not just technologically advanced, but ethically exemplary.