Boosting AI Model Accuracy by 8% in 3 Months: Advanced Experimental Designs for U.S. AI Development Teams

In the rapidly evolving landscape of artificial intelligence, achieving incremental improvements in model performance can translate into significant competitive advantages. For U.S. AI development teams, the quest for higher accuracy is relentless, driven by the demand for more reliable predictions, robust systems, and efficient operations. This comprehensive guide will delve into advanced experimental designs, offering a roadmap to potentially boost your AI model accuracy by an impressive 8% within a mere three months. We will explore methodologies, practical applications, and strategic considerations essential for achieving this ambitious goal.

The Imperative of AI Model Accuracy in Today’s Market

The stakes for AI model accuracy have never been higher. From autonomous vehicles and medical diagnostics to financial fraud detection and personalized recommendations, the performance of AI models directly impacts safety, profitability, and user experience. Even a small percentage increase in accuracy can lead to substantial gains: reduced errors, enhanced decision-making, and superior product offerings. For U.S. companies operating in a highly competitive global market, optimizing AI model accuracy is not just a technical challenge but a strategic imperative.

Many teams find themselves caught in a cycle of incremental tweaks and ad-hoc experiments, often without a clear, structured approach to improvement. This can lead to diminishing returns, wasted resources, and missed opportunities. The key to breaking this cycle lies in adopting rigorous, data-driven experimental designs that allow for systematic exploration of model parameters, data preprocessing techniques, and architectural choices. Our focus will be on transitioning from reactive problem-solving to proactive, hypothesis-driven experimentation that consistently elevates AI model accuracy.

Understanding the Foundations of Experimental Design in AI

Before diving into advanced techniques, it’s crucial to revisit the core principles of experimental design. In the context of AI, an experiment involves systematically varying certain inputs or configurations of a model and observing the effect on its performance metrics, particularly accuracy. The goal is to identify which changes lead to statistically significant improvements.

Key Principles:

- Control: Isolating the variables you want to test to ensure that any observed changes are due to your modifications and not confounding factors.

- Randomization: Assigning experimental units (e.g., data splits, model configurations) randomly to different treatment groups to minimize bias.

- Replication: Repeating experiments multiple times or across different data subsets to confirm the robustness and generalizability of the results.

- Blinding: In some cases, concealing the experimental conditions from those evaluating the results to prevent conscious or unconscious bias.

While these principles are fundamental, their application in AI often requires sophisticated statistical methods and computational resources. The complexity arises from the high dimensionality of AI models, the vast number of hyperparameters, and the intricate interactions between different components of the machine learning pipeline. Achieving an 8% boost in AI model accuracy within three months demands a more strategic and efficient approach than simple trial-and-error.

Phase 1: Diagnostic Assessment and Baseline Establishment (Month 1)

The first month of your three-month journey should be dedicated to a thorough diagnostic assessment of your current AI model accuracy and identifying the most promising areas for improvement. This phase is critical for establishing a solid baseline and formulating targeted hypotheses.

1. Comprehensive Model Evaluation and Error Analysis:

Start by meticulously evaluating your existing model’s performance. Go beyond aggregate accuracy metrics. Deep-dive into specific error types. Are there particular classes that the model struggles with? Does it perform poorly on certain data subsets (e.g., minority classes, specific demographics, unusual edge cases)? Visualizing confusion matrices, precision-recall curves, and ROC curves for different segments of your data can reveal hidden patterns.

- Quantitative Analysis: Calculate per-class accuracy, F1-scores, and misclassification rates.

- Qualitative Analysis: Manually inspect misclassified instances. What common characteristics do they share? Are there issues with data labeling, feature representation, or model bias?
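The quantitative side of this error analysis can be sketched in a few lines. The example below is a minimal illustration using only the Python standard library; the function name `per_class_report` and the toy labels are hypothetical, and in practice you would feed in your own validation labels and predictions. Per-class recall here plays the role of per-class accuracy.

```python
from collections import Counter

def per_class_report(y_true, y_pred):
    """Return {label: precision, recall, f1, support} computed from two label lists."""
    labels = sorted(set(y_true) | set(y_pred))
    # Confusion counts keyed by (true_label, predicted_label); missing pairs count as 0.
    confusion = Counter(zip(y_true, y_pred))
    report = {}
    for label in labels:
        tp = confusion[(label, label)]
        fp = sum(confusion[(other, label)] for other in labels if other != label)
        fn = sum(confusion[(label, other)] for other in labels if other != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[label] = {"precision": precision, "recall": recall, "f1": f1, "support": tp + fn}
    return report

# Hypothetical toy data: the rare "bird" class is never predicted correctly.
y_true = ["cat", "cat", "cat", "dog", "dog", "bird"]
y_pred = ["cat", "cat", "dog", "dog", "dog", "cat"]

report = per_class_report(y_true, y_pred)
for label, metrics in report.items():
    print(label, {name: round(value, 3) for name, value in metrics.items()})
```

Sorting the report by F1 or recall is a quick way to pick which classes to inspect manually first.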

2. Data Quality and Feature Engineering Audit:

Poor data quality is often the primary bottleneck for AI model accuracy. Conduct a rigorous audit of your training and validation datasets.

- Data Cleaning: Identify and address missing values, outliers, and inconsistencies.

- Labeling Accuracy: Review a sample of your labeled data for errors. Consider implementing double-labeling for critical datasets.

- Feature Relevance: Use techniques like feature importance scores (e.g., from tree-based models, SHAP values) to understand which features contribute most to predictions. Are there redundant or irrelevant features?

- Feature Engineering Potential: Brainstorm and prototype new features that could capture more information or better represent underlying patterns. This often involves domain expertise.

3. Hyperparameter Sensitivity Analysis:

Before launching into complex experiments, gain an understanding of how sensitive your model is to its key hyperparameters. While a full hyperparameter optimization is a later step, a preliminary sensitivity analysis can guide your experimental design.

- One-Factor-at-a-Time (OFAT): While not exhaustive, varying one hyperparameter while keeping others constant can provide initial insights into its impact on AI model accuracy.

- Random Search or Bayesian Optimization (Limited Scope): Perform a limited-scope random search or Bayesian optimization on a few critical hyperparameters to identify promising ranges.
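A one-factor-at-a-time sweep is simple enough to sketch directly. In the example below, `validation_accuracy` is a hypothetical stand-in for "train the model and score it on validation data", and the hyperparameter names and values are illustrative assumptions rather than recommendations.

```python
import math

def validation_accuracy(config):
    """Hypothetical stand-in for a real train-and-evaluate run."""
    lr_penalty = (math.log10(config["learning_rate"]) + 3) ** 2  # toy optimum near 1e-3
    dropout_penalty = (config["dropout"] - 0.2) ** 2              # toy optimum near 0.2
    return 0.90 - 0.02 * lr_penalty - 0.5 * dropout_penalty

def ofat_sweep(baseline, grid, score_fn):
    """Vary one hyperparameter at a time around the baseline.

    Returns the spread (max - min) of the score for each hyperparameter.
    """
    sensitivity = {}
    for name, values in grid.items():
        scores = [score_fn({**baseline, name: value}) for value in values]
        sensitivity[name] = max(scores) - min(scores)
    return sensitivity

baseline = {"learning_rate": 1e-3, "dropout": 0.2}
grid = {"learning_rate": [1e-4, 1e-3, 1e-2], "dropout": [0.1, 0.2, 0.3]}

sensitivity = ofat_sweep(baseline, grid, validation_accuracy)
for name, spread in sorted(sensitivity.items(), key=lambda item: -item[1]):
    print(f"{name}: accuracy spread {spread:.3f}")
```

Hyperparameters with the largest spread are natural candidates for the factorial experiments in Month 2. Because OFAT holds everything else at the baseline, it says nothing about interactions.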

4. Formulating Hypotheses for Improvement:

Based on your diagnostic assessment, articulate specific, testable hypotheses. Instead of vague goals like “improve accuracy,” aim for statements like: “Implementing a new data augmentation strategy X will increase AI model accuracy by Y% for minority classes,” or “Replacing feature set A with feature set B will reduce false positives by Z%.” These hypotheses will guide your experimental designs.

Phase 2: Advanced Experimental Designs for Targeted Improvement (Month 2)

With a solid understanding of your model’s weaknesses and clear hypotheses, Month 2 focuses on deploying advanced experimental designs to systematically test your assumptions and identify impactful changes.

1. Factorial Designs for Hyperparameter Optimization and Feature Interaction:

Instead of OFAT, which misses interactions between variables, factorial designs allow you to test multiple factors (e.g., hyperparameters, feature sets, regularization techniques) simultaneously and understand their combined effects. This is crucial for boosting AI model accuracy efficiently.

- Full Factorial Designs: Test all possible combinations of levels for each factor. While comprehensive, this can be computationally expensive for many factors.

- Fractional Factorial Designs: A more practical approach for AI, where you run a carefully chosen subset of combinations. Depending on the design's resolution, you can still estimate main effects (and, in higher-resolution designs, important two-way interactions) with far fewer runs. This is particularly useful for identifying the most influential factors quickly.

- Response Surface Methodology (RSM): Once key factors are identified, RSM can be used to model the relationship between the factors and the AI model accuracy, helping to find optimal settings within a continuous range. This is excellent for fine-tuning.

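Example: If you’re testing learning rate (Factor A), batch size (Factor B), and a new regularization technique (Factor C), each at two levels, a 2^3 full factorial design would test 8 combinations. A half-fraction design (2^(3-1)) would test 4 strategically chosen combinations; the trade-off is that each main effect becomes aliased with a two-factor interaction, so it works best as a quick screen when interactions are expected to be small.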
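To make the three-factor example concrete, the sketch below builds the 2^3 design with coded levels (-1 for low, +1 for high), derives a half fraction, and estimates main effects. The response function `toy_accuracy` and its coefficients are hypothetical; in a real experiment each response would come from a training run.

```python
from itertools import product

factors = ["learning_rate", "batch_size", "regularization"]

# Full 2^3 factorial: every combination of coded low (-1) and high (+1) levels.
full_design = list(product([-1, 1], repeat=3))

# Half fraction 2^(3-1) with defining relation I = ABC: keep runs where A*B*C == +1.
half_fraction = [run for run in full_design if run[0] * run[1] * run[2] == 1]

def main_effects(design, responses):
    """Main effect of a factor = mean response at +1 minus mean response at -1."""
    effects = {}
    for i, name in enumerate(factors):
        high = [y for run, y in zip(design, responses) if run[i] == 1]
        low = [y for run, y in zip(design, responses) if run[i] == -1]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects

def toy_accuracy(run):
    """Hypothetical response with an A x B interaction (not real training results)."""
    a, b, c = run
    return 0.80 + 0.02 * a - 0.01 * b + 0.005 * c + 0.01 * a * b

full_effects = main_effects(full_design, [toy_accuracy(r) for r in full_design])
half_effects = main_effects(half_fraction, [toy_accuracy(r) for r in half_fraction])

print("runs:", len(full_design), "vs", len(half_fraction))
print("full:", {k: round(v, 3) for k, v in full_effects.items()})
print("half:", {k: round(v, 3) for k, v in half_effects.items()})
```

In this toy setup the half fraction overstates the regularization effect, because with I = ABC the main effect of C is aliased with the A x B interaction. That is the price of halving the number of runs, and a reason to follow up on surprising screening results with a fuller design.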

2. Design of Experiments (DOE) for Data Augmentation and Preprocessing Strategies:

Data augmentation and preprocessing are often overlooked sources of significant accuracy gains. DOE principles can be applied here to test different augmentation policies or preprocessing pipelines.

- Taguchi Methods: These robust design methods focus on making the model less sensitive to noise factors (e.g., variations in input data) by optimizing control factors. This can lead to more stable and higher AI model accuracy in real-world scenarios.

- Automated Data Augmentation (AutoAugment, RandAugment): While not strictly a traditional DOE, these techniques automate the search for optimal augmentation policies, essentially running numerous implicit experiments to find the best combination of transformations. Integrating these into your experimental pipeline can accelerate discovery.
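One way to apply the Taguchi idea is to score each candidate preprocessing pipeline (the control factor) under several noise conditions and rank the candidates by the larger-the-better signal-to-noise ratio, S/N = -10 * log10(mean(1 / y^2)). The sketch below assumes hypothetical pipeline names and accuracy values purely for illustration.

```python
import math

def sn_larger_is_better(values):
    """Taguchi larger-the-better signal-to-noise ratio, in decibels."""
    return -10 * math.log10(sum(1 / (y * y) for y in values) / len(values))

# Hypothetical validation accuracies under three noise conditions
# (for example: clean inputs, blurred inputs, inputs with sensor noise).
results = {
    "pipeline_a": [0.95, 0.90, 0.72],  # higher peak, fragile under noise
    "pipeline_b": [0.86, 0.86, 0.85],  # same mean accuracy, far more stable
}

sn = {name: sn_larger_is_better(acc) for name, acc in results.items()}
most_robust = max(sn, key=sn.get)

for name, acc in results.items():
    print(f"{name}: mean={sum(acc) / len(acc):.4f}  S/N={sn[name]:.3f} dB")
print("most robust:", most_robust)
```

Both pipelines have the same mean accuracy here, but the S/N ratio penalizes the one that collapses under a noise condition, which is the behavior the robust-design approach is meant to surface.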

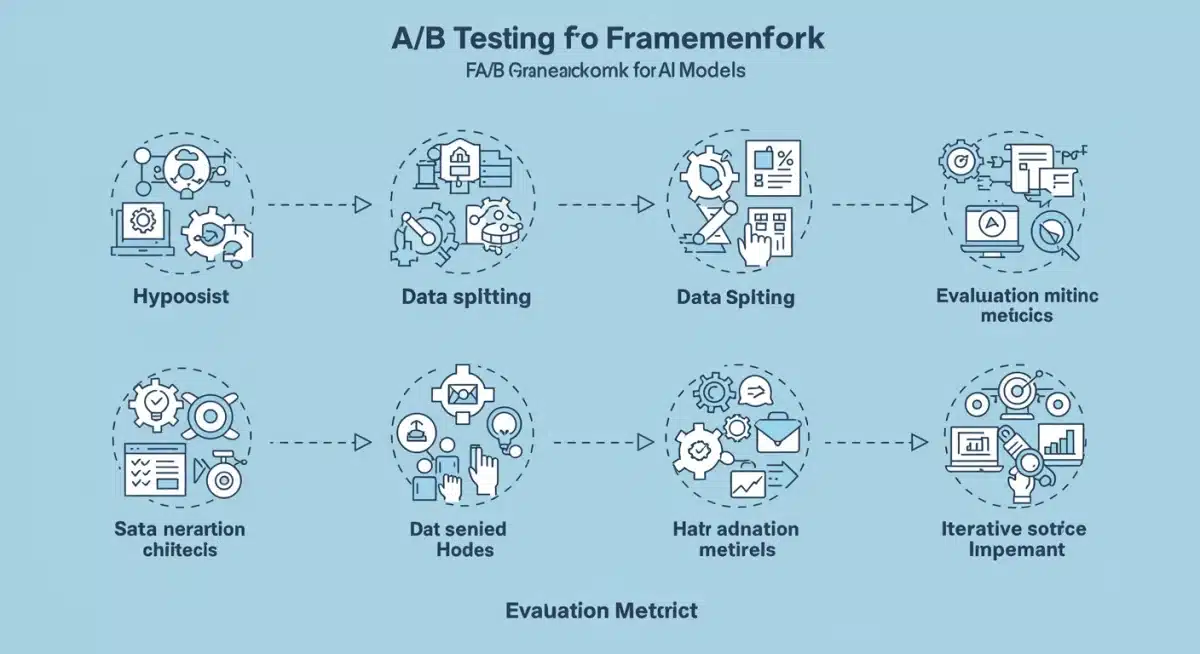

3. A/B Testing and Multi-Armed Bandits for Model Comparisons:

When comparing different model architectures, training regimes, or even entirely different algorithms, A/B testing provides a robust framework. For more dynamic allocation of resources to promising models, consider Multi-Armed Bandits.

- A/B Testing: For offline comparisons, evaluate every model version on the same held-out data so the comparison is paired. For online comparisons, randomly split a small portion of production traffic (if feasible and safe) and serve a different model version to each group. In both cases, statistically compare the performance metrics (e.g., AI model accuracy, latency, resource usage) to determine the superior version, and run a statistical power calculation beforehand so the test can detect the difference you care about.

- Multi-Armed Bandits (MAB): Useful when you have multiple model candidates or hyperparameter settings and want to dynamically allocate resources (e.g., training time, inference traffic) to the best-performing ones over time. MAB algorithms (e.g., UCB, Thompson Sampling) balance exploration (trying new options) and exploitation (using the best-known option). In certain contexts, this converges on the best configuration with less wasted traffic or compute than a fixed-allocation A/B test.
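The exploration/exploitation balance is easiest to see in a small simulation. The sketch below implements Bernoulli Thompson Sampling with Beta(1, 1) priors using only the standard library. The "true" per-model success rates are hypothetical and exist only to simulate feedback; in production the reward would be an observed outcome such as "prediction was correct".

```python
import random

def thompson_sampling(true_rates, rounds, seed=0):
    """Bernoulli Thompson Sampling with Beta(1, 1) priors.

    Returns the number of times each arm (model candidate) was selected.
    """
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw one plausible success rate per arm from its Beta posterior.
        samples = [rng.betavariate(s + 1, f + 1) for s, f in zip(successes, failures)]
        arm = samples.index(max(samples))
        # Simulated feedback: reward 1 with the arm's (hidden) true success rate.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]

# Hypothetical success rates for three candidate models.
pulls = thompson_sampling(true_rates=[0.70, 0.75, 0.90], rounds=5000)
print("selections per model:", pulls)
```

Early rounds spread selections across all candidates; as evidence accumulates, most of the budget shifts to the strongest one. A fixed-split A/B test would instead keep sending an equal share to the weaker candidates for the whole experiment.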

Phase 3: Iteration, Validation, and Deployment (Month 3)

The final month is about consolidating gains, rigorously validating improvements, and preparing for deployment. This phase ensures that the 8% increase in AI model accuracy is robust and sustainable.

1. Robust Statistical Validation:

Do not rely solely on observed improvements. Use statistical tests to confirm that your accuracy gains are statistically significant and not due to random chance.

- Hypothesis Testing: Apply t-tests, ANOVA, or non-parametric tests (e.g., Wilcoxon signed-rank test) to compare the performance of your new model against the baseline.

- Cross-Validation: Implement k-fold cross-validation or stratified cross-validation to ensure your model’s performance is consistent across different data partitions and to get a more reliable estimate of how accuracy generalizes to unseen data.

- Confidence Intervals: Report accuracy with confidence intervals to provide a range of plausible values for the true population accuracy.
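As one illustration of reporting a gain with a confidence interval, the sketch below computes a percentile bootstrap interval for the accuracy difference between a candidate and a baseline scored on the same test examples. The bootstrap is a swapped-in technique chosen because it needs only the standard library; the per-example correctness vectors are constructed by hand and are hypothetical.

```python
import random

def bootstrap_accuracy_gain(baseline_correct, candidate_correct,
                            n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for (candidate accuracy - baseline accuracy).

    Both inputs are 0/1 correctness flags for the SAME test examples, in the same order.
    """
    rng = random.Random(seed)
    n = len(baseline_correct)
    # Paired per-example difference: +1 candidate-only correct, -1 baseline-only correct.
    diffs = [c - b for b, c in zip(baseline_correct, candidate_correct)]
    observed = sum(diffs) / n
    # Resample examples with replacement and recompute the mean difference each time.
    boot = sorted(sum(rng.choices(diffs, k=n)) / n for _ in range(n_boot))
    lower = boot[int((alpha / 2) * n_boot)]
    upper = boot[int((1 - alpha / 2) * n_boot) - 1]
    return observed, lower, upper

# Hypothetical results on 1,000 test examples:
# baseline is correct on 800; candidate loses 20 of those and gains 100 others (880 total).
baseline_correct = [1] * 800 + [0] * 200
candidate_correct = [1] * 780 + [0] * 20 + [1] * 100 + [0] * 100

gain, lower, upper = bootstrap_accuracy_gain(baseline_correct, candidate_correct)
print(f"accuracy gain: {gain:.3f}, 95% CI: [{lower:.3f}, {upper:.3f}]")
```

If the interval excludes zero, the gain is unlikely to be a resampling artifact of this particular test set. It does not, on its own, protect against overfitting to a test set that was reused across many experiments.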

2. Ensemble Methods and Model Stacking:

Often, combining multiple models yields higher AI model accuracy than any single model alone. This is particularly effective once you have several strong candidates from your experimental phase.

- Bagging (e.g., Random Forests): Training multiple models independently on different bootstrap samples of the data and averaging (or voting on) their predictions.

- Boosting (e.g., XGBoost, LightGBM): Sequentially building models where each new model tries to correct the errors of the previous ones.

- Stacking: Training a meta-model to learn how to best combine the predictions of several base models. This can be a powerful way to squeeze out additional percentage points of AI model accuracy.
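The simplest way to combine several candidates is soft voting: average each model's predicted class probabilities and take the most likely class. The sketch below shows that averaging step only (it is not a full stacking implementation, which would train a meta-model on held-out predictions). The probabilities for the three models are hypothetical.

```python
def soft_vote(prob_lists):
    """Average per-class probabilities across models and return the argmax class per example."""
    n_models = len(prob_lists)
    predictions = []
    for per_model in zip(*prob_lists):  # one example at a time, across all models
        averaged = [sum(class_probs) / n_models for class_probs in zip(*per_model)]
        predictions.append(averaged.index(max(averaged)))
    return predictions

def accuracy(y_true, y_pred):
    """Fraction of examples where the prediction matches the label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def hard_labels(probs):
    """Argmax class for each example of a single model."""
    return [row.index(max(row)) for row in probs]

# Hypothetical [P(class 0), P(class 1)] outputs from three models on five examples.
y_true = [0, 1, 1, 0, 1]
model_a = [[0.9, 0.1], [0.6, 0.4], [0.2, 0.8], [0.7, 0.3], [0.3, 0.7]]
model_b = [[0.6, 0.4], [0.2, 0.8], [0.55, 0.45], [0.8, 0.2], [0.4, 0.6]]
model_c = [[0.7, 0.3], [0.3, 0.7], [0.3, 0.7], [0.45, 0.55], [0.1, 0.9]]

ensemble_pred = soft_vote([model_a, model_b, model_c])
for name, probs in [("a", model_a), ("b", model_b), ("c", model_c)]:
    print(f"model_{name} accuracy:", accuracy(y_true, hard_labels(probs)))
print("ensemble accuracy:", accuracy(y_true, ensemble_pred))
```

In this toy data each model makes a different single mistake, so averaging corrects all three. Real ensembles help most under the same condition: the members' errors need to be at least partly uncorrelated.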

3. Continuous Monitoring and A/B Testing in Production:

The journey to higher AI model accuracy doesn’t end at deployment. Models can degrade over time due to data drift or concept drift. Implement robust monitoring systems.

- Performance Dashboards: Track key metrics (accuracy, precision, recall, F1-score) in real-time. Set up alerts for significant drops in performance.

- Data Drift Detection: Monitor the distribution of incoming data compared to your training data. If significant shifts occur, it may signal a need for retraining or model adaptation.

- Shadow Deployment / Canary Releases: Deploy new, improved models alongside the old ones before a full rollout. In a shadow deployment, the new model receives a copy of live traffic but its predictions are only logged, not served. In a canary release, a small percentage of live traffic is actually routed to the new version. Either way, this is a critical step to ensure that the increased AI model accuracy observed in validation sets translates to real-world scenarios.
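For drift detection on a single numeric feature, one commonly used summary is the Population Stability Index (PSI), which compares binned distributions of a reference sample and a recent sample. The sketch below is a minimal standard-library version; PSI is a swapped-in example rather than the only option, the Gaussian samples are simulated, and any alerting threshold is application-specific and should be calibrated on your own data.

```python
import math
import random

def population_stability_index(expected, actual, n_bins=10):
    """PSI between a reference sample and a new sample.

    Uses equal-width bins over the reference range; assumes the reference is not constant.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            # Values outside the reference range are clamped into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), n_bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Simulated feature values: training data, fresh data from the same distribution,
# and fresh data whose mean has shifted.
rng = random.Random(0)
training = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same_dist = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(0.7, 1.0) for _ in range(5000)]

psi_stable = population_stability_index(training, same_dist)
psi_shifted = population_stability_index(training, shifted)
print(f"PSI (no drift): {psi_stable:.4f}")
print(f"PSI (shifted):  {psi_shifted:.4f}")
```

Running a check like this per feature on a schedule gives an early signal that inputs have moved away from the training data, often before labeled outcomes are available to measure accuracy directly.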

Key Considerations for U.S. AI Development Teams

Achieving an 8% boost in AI model accuracy requires not just technical prowess but also strategic alignment and operational excellence. For U.S. teams, there are specific factors to consider:

Resource Allocation and Computational Power:

Advanced experimental designs can be computationally intensive. Ensure your team has access to sufficient GPU/TPU resources, cloud computing credits, and efficient MLOps pipelines to manage experiments at scale. Prioritize experiments that offer the highest potential impact based on your initial diagnostic phase.

Team Collaboration and Expertise:

This endeavor requires a multidisciplinary approach. Data scientists, machine learning engineers, domain experts, and even product managers need to collaborate closely. Foster a culture of experimentation, where failures are seen as learning opportunities, and insights are shared across the team to collectively improve AI model accuracy.

Ethical AI and Bias Detection:

As you strive for higher AI model accuracy, it’s paramount to simultaneously address ethical considerations. Ensure that improvements don’t inadvertently introduce or exacerbate biases against specific demographic groups. Monitor fairness metrics alongside accuracy, and consider techniques like adversarial debiasing or fairness-aware training.
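Monitoring fairness alongside accuracy can start with two simple per-group numbers: accuracy and the rate of positive predictions. The sketch below computes both and reports the gap between groups. The group labels and predictions are hypothetical, and the positive-rate gap is only one of several possible fairness measures.

```python
from collections import defaultdict

def group_metrics(y_true, y_pred, groups):
    """Per-group accuracy and positive-prediction rate for binary predictions."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "positive": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        stats[group]["n"] += 1
        stats[group]["correct"] += int(truth == pred)
        stats[group]["positive"] += int(pred == 1)
    return {
        group: {
            "accuracy": s["correct"] / s["n"],
            "positive_rate": s["positive"] / s["n"],
        }
        for group, s in stats.items()
    }

# Hypothetical labels, predictions, and group membership.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 0, 0, 0, 1]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

metrics = group_metrics(y_true, y_pred, groups)
accuracies = [m["accuracy"] for m in metrics.values()]
positive_rates = [m["positive_rate"] for m in metrics.values()]
accuracy_gap = max(accuracies) - min(accuracies)
parity_gap = max(positive_rates) - min(positive_rates)

print(metrics)
print("accuracy gap:", accuracy_gap, "positive-rate gap:", parity_gap)
```

Tracking these gaps for every experiment makes it visible when an overall accuracy gain comes at the expense of one group.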

Regulatory Compliance and Explainability:

For U.S. industries like healthcare, finance, and legal services, regulatory compliance is non-negotiable. Ensure that your experimental designs and model improvements adhere to the regulations that apply to your data (e.g., HIPAA for health information, CCPA for California residents, and GDPR if you process personal data of people in the EU). Focus on model explainability (XAI) to understand why your model is making certain predictions, which can be crucial for auditing and trust, even as you push for higher AI model accuracy.

Practical Steps to Kickstart Your 3-Month Plan

To summarize, here’s a condensed action plan for U.S. AI development teams:

- Weeks 1-4 (Month 1 – Diagnostic):

  - Conduct thorough error analysis and identify the top 3-5 areas for AI model accuracy improvement.

  - Perform a data quality audit and brainstorm new feature engineering ideas.

  - Run preliminary hyperparameter sensitivity tests.

  - Formulate clear, testable hypotheses for each improvement area.

- Weeks 5-8 (Month 2 – Experimentation):

  - Implement fractional factorial designs for hyperparameter tuning and feature interaction analysis.

  - Design experiments for data augmentation strategies using DOE principles or automated tools.

  - Utilize A/B testing or Multi-Armed Bandits to compare promising model architectures or algorithms.

  - Iteratively refine models based on experimental results.

- Weeks 9-12 (Month 3 – Validation & Deployment):

  - Rigorously validate best-performing models using statistical tests and extensive cross-validation.

  - Explore ensemble methods (bagging, boosting, stacking) to combine top models for incremental gains in AI model accuracy.

  - Prepare for deployment with shadow deployment or canary releases.

  - Set up continuous monitoring for data drift and model performance in production.

Conclusion

Achieving an 8% boost in AI model accuracy within three months is an ambitious goal, but one that dedicated U.S. AI development teams can realistically pursue. By moving beyond ad-hoc experimentation and embracing advanced experimental designs (such as factorial designs, Taguchi methods, A/B testing, and Multi-Armed Bandits), teams can systematically identify and implement impactful changes. This structured approach, combined with rigorous statistical validation, continuous monitoring, and close attention to ethical considerations, will not only elevate your models’ performance but also establish a robust framework for sustained improvement in a competitive AI landscape.