Ethical AI in Patient Care: Key Frameworks for US Healthcare Providers in 2026

Implementing artificial intelligence in US patient care by 2026 demands adherence to established ethical AI frameworks to ensure responsible deployment, safeguard patient rights, and promote equitable health outcomes across diverse populations.

The landscape of healthcare is rapidly evolving, with artificial intelligence (AI) emerging as a transformative force. However, integrating AI into clinical practice, especially in the United States, raises a complex web of ethical considerations. Understanding and applying the key ethical AI frameworks available to US healthcare providers in 2026 is not just a regulatory necessity but a moral imperative for ensuring trust, equity, and better patient outcomes.

The imperative of ethical AI in healthcare by 2026

As AI technologies become increasingly sophisticated and pervasive, their application in patient care promises revolutionary advancements, from enhanced diagnostics to personalized treatment plans. Yet, this progress is not without its challenges. The ethical implications of AI in healthcare are profound, touching upon issues of patient privacy, algorithmic bias, accountability, and the very nature of the doctor-patient relationship.

In the US, healthcare providers face a unique set of pressures, including stringent regulations, diverse patient populations, and a complex insurance landscape. By 2026, navigating these waters effectively will require a deep understanding of ethical AI principles and the frameworks designed to uphold them. The goal is to harness AI’s power while mitigating potential harms, ensuring that technology serves humanity first.

Addressing algorithmic bias and fairness

One of the most critical ethical concerns in AI is algorithmic bias. AI models trained on unrepresentative or biased datasets can perpetuate and even amplify existing health disparities, leading to inequitable care for certain demographic groups. Ensuring fairness is paramount.

- Data diversity: Actively seek and incorporate diverse datasets that accurately reflect the patient population.

- Bias detection tools: Implement advanced tools to identify and mitigate biases in AI algorithms throughout their lifecycle.

- Regular auditing: Conduct periodic audits of AI systems to ensure they perform equitably across all patient groups.
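As a minimal sketch of what a periodic audit might compute, the Python below compares sensitivity (true-positive rate) and false-positive rate across patient groups for a binary classifier, in the spirit of the equalized-odds fairness criterion. The function names, the `(group, y_true, y_pred)` record format, and the 0.1 tolerance are illustrative assumptions, not part of any standard; a real audit would also weigh sample sizes, confidence intervals, and clinically meaningful subgroups.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Optional, Tuple

@dataclass
class GroupMetrics:
    n: int                 # number of patients in the group
    tpr: Optional[float]   # sensitivity: share of true positives the model flagged
    fpr: Optional[float]   # share of true negatives the model wrongly flagged

def audit_by_group(records: Iterable[Tuple[str, int, int]]) -> Dict[str, GroupMetrics]:
    """Tally a confusion matrix per group from (group, y_true, y_pred) rows with 0/1 labels."""
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
    for group, y_true, y_pred in records:
        c = counts[group]
        if y_true == 1:
            c["tp" if y_pred == 1 else "fn"] += 1
        else:
            c["fp" if y_pred == 1 else "tn"] += 1
    metrics = {}
    for group, c in counts.items():
        pos, neg = c["tp"] + c["fn"], c["fp"] + c["tn"]
        metrics[group] = GroupMetrics(
            n=pos + neg,
            tpr=c["tp"] / pos if pos else None,  # undefined if the group has no positives
            fpr=c["fp"] / neg if neg else None,  # undefined if the group has no negatives
        )
    return metrics

def max_gap(metrics: Dict[str, GroupMetrics], attr: str) -> float:
    """Largest difference in a rate between any two groups, ignoring undefined rates."""
    values = [getattr(m, attr) for m in metrics.values() if getattr(m, attr) is not None]
    return max(values) - min(values) if values else 0.0

# Hypothetical tolerance: flag the model for review if a rate differs by more than 0.1.
TOLERANCE = 0.1

if __name__ == "__main__":
    rows = [("A", 1, 1), ("A", 1, 1), ("A", 0, 0), ("A", 0, 1),
            ("B", 1, 0), ("B", 1, 1), ("B", 0, 0), ("B", 0, 0)]
    m = audit_by_group(rows)
    for attr in ("tpr", "fpr"):
        gap = max_gap(m, attr)
        print(attr, round(gap, 2), "REVIEW" if gap > TOLERANCE else "ok")
```

Comparing error rates rather than overall accuracy matters here: a model can look accurate on average while missing far more true cases in one group than another.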

Ultimately, the goal extends beyond compliance: it is to build a healthcare ecosystem where AI benefits all patients, regardless of background, and where technological advances do not inadvertently exclude or disadvantage vulnerable populations. This proactive approach is fundamental to building trust in AI-driven healthcare solutions.

Key ethical frameworks for AI in US healthcare

Several ethical frameworks are emerging to guide the responsible development and deployment of AI in healthcare. These frameworks provide a structured approach for providers, developers, and policymakers to evaluate, implement, and monitor AI systems. Adherence to these guidelines is crucial for building public trust and ensuring beneficial outcomes.

The core tenets often revolve around principles such as transparency, accountability, non-maleficence, beneficence, and justice. These principles act as a compass, directing the ethical integration of AI into sensitive clinical environments. Understanding each framework’s nuances allows for a tailored approach to AI governance within diverse healthcare settings.

The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems

The IEEE (Institute of Electrical and Electronics Engineers) has been a significant voice in establishing ethical guidelines for AI. Its 'Ethically Aligned Design' document offers a comprehensive framework focusing on human well-being, transparency, and accountability, with practical recommendations for designers and developers.

- Human rights focus: Emphasizes that AI systems must respect fundamental human rights.

- Data agency: Advocates for individuals to have control over their data.

- Technical accountability: Calls for clear lines of responsibility for AI system performance and impact.

This initiative moves beyond abstract principles, offering actionable guidance that can be directly applied to the development and deployment of AI technologies in patient care. Its global scope ensures a broad perspective on ethical challenges.

Navigating data privacy and security in AI applications

Patient data is the lifeblood of healthcare AI, but its use raises significant privacy and security concerns. Safeguarding protected health information (PHI) is not only an ethical obligation but a legal one, particularly under the Health Insurance Portability and Accountability Act (HIPAA) in the US. AI systems must be designed with robust data protection measures from the outset.

Ethical data use in AI goes further than legal compliance: it means maintaining patient trust and using data responsibly and transparently. Healthcare providers should adopt a 'privacy-by-design' approach, integrating data protection into every stage of AI development and deployment.
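One concrete privacy-by-design step is to strip direct identifiers and replace the record key with a keyed hash before data reaches an AI training or inference pipeline. The sketch below assumes hypothetical field names (`mrn`, `name`, and so on) and uses Python's standard `hmac` module. Note that this is pseudonymization, not HIPAA de-identification: the Safe Harbor method requires removing 18 categories of identifiers, and quasi-identifiers left in the record (such as dates or ZIP codes) can still enable re-identification.

```python
import hashlib
import hmac

# Hypothetical set of direct identifiers for this sketch; a real pipeline would
# map its own schema against all 18 HIPAA Safe Harbor identifier categories.
DIRECT_IDENTIFIERS = {"name", "phone", "email", "street_address"}

def pseudonymize(record: dict, key: bytes) -> dict:
    """Drop direct identifiers and replace the MRN with a stable keyed token."""
    out = {}
    for field, value in record.items():
        if field in DIRECT_IDENTIFIERS:
            continue  # never forward direct identifiers to the AI pipeline
        if field == "mrn":
            # HMAC-SHA256 yields the same token for the same patient (so records can be
            # linked) but cannot be recomputed by anyone without the secret key.
            out["patient_token"] = hmac.new(
                key, str(value).encode("utf-8"), hashlib.sha256
            ).hexdigest()
        else:
            out[field] = value
    return out

if __name__ == "__main__":
    secret = b"load-me-from-a-key-vault"  # assumption: key is stored apart from the data
    print(pseudonymize({"mrn": "000123", "name": "Jane Doe", "age": 54, "hba1c": 7.2}, secret))
```

A keyed hash is used instead of a plain hash because medical record numbers come from a small, guessable space; without the key, an attacker cannot simply hash candidate MRNs and match them.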

Ensuring transparency and explainability in AI

AI models, especially complex deep learning algorithms, can often operate as ‘black boxes,’ making it difficult to understand how they arrive at their conclusions. In healthcare, this lack of transparency can be problematic, posing challenges for clinical decision-making, patient trust, and accountability. Explainable AI (XAI) aims to address this issue.

Transparency allows clinicians to critically evaluate AI recommendations and integrate them thoughtfully into patient care. Patients also have a right to understand how AI is being used in their treatment and how it affects decisions about their health. This supports patient agency and meaningful informed consent.

- Model interpretability: Develop AI models that can provide clear, understandable explanations for their outputs.

- Documentation: Maintain thorough documentation of AI model design, training data, and decision-making processes.

- User interfaces: Design user interfaces that present AI insights in an intuitive and transparent manner for healthcare professionals.
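For inherently interpretable models, an explanation can be computed directly. The sketch below assumes a hypothetical logistic risk model with made-up weights and shows how each input's contribution to the log-odds can be ranked and presented alongside the predicted probability. Complex deep learning models generally need post hoc explanation methods instead, which only approximate the model's reasoning.

```python
import math
from typing import Dict, List, Tuple

def explain_linear_risk(
    weights: Dict[str, float], intercept: float, features: Dict[str, float]
) -> Tuple[float, List[Tuple[str, float]]]:
    """Return the predicted probability and features ranked by contribution to the log-odds."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    logit = intercept + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))  # logistic function
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked

if __name__ == "__main__":
    # Hypothetical readmission-risk model; weights are illustrative, not clinical.
    weights = {"age_decades": 0.4, "prior_admissions": 0.7, "on_anticoagulant": -0.2}
    prob, ranked = explain_linear_risk(
        weights, -3.0, {"age_decades": 7, "prior_admissions": 2, "on_anticoagulant": 1}
    )
    print(f"Predicted risk: {prob:.0%}")
    for name, contrib in ranked:
        direction = "raises" if contrib > 0 else "lowers"
        print(f"  {name} {direction} risk (log-odds {contrib:+.2f})")
```

Because the contributions add up exactly to the model's log-odds, the explanation shown to the clinician is faithful to the model rather than an approximation of it.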

By prioritizing transparency, healthcare providers can ensure that AI acts as a valuable assistant rather than an inscrutable oracle, empowering clinicians and patients alike.

Accountability and liability in AI-driven care

One of the most complex ethical and legal challenges posed by AI in healthcare is determining accountability when things go wrong. If an AI system makes an incorrect diagnosis or recommends a flawed treatment, who is responsible? Is it the developer, the clinician, the hospital, or the AI itself?

Establishing clear lines of accountability is vital for patient safety and for building trust in AI technologies. US healthcare providers must proactively address these questions, potentially through new policies, legal frameworks, and insurance mechanisms. This clarity is essential for fostering innovation while simultaneously protecting patients.
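Clear accountability depends on being able to reconstruct what an AI system recommended and what a clinician decided. As a minimal sketch, assuming a hypothetical `AIDecisionRecord` schema (not drawn from any regulation or vendor product), each AI-assisted decision could be logged with the model version, a fingerprint of the inputs, the AI output, the responsible clinician, and a required reason whenever the clinician overrides the recommendation.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional

def digest_inputs(inputs: dict) -> str:
    """Fingerprint the model inputs; the inputs themselves stay in the source system."""
    canonical = json.dumps(inputs, sort_keys=True)  # key order must not change the hash
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

@dataclass(frozen=True)
class AIDecisionRecord:
    model_name: str
    model_version: str
    input_digest: str
    ai_output: str
    reviewing_clinician: str
    final_decision: str
    override_reason: Optional[str] = None
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def __post_init__(self) -> None:
        # Require a documented rationale whenever the clinician departs from the AI output.
        if self.overridden and not self.override_reason:
            raise ValueError("override_reason is required when final_decision differs from ai_output")

    @property
    def overridden(self) -> bool:
        return self.final_decision != self.ai_output

if __name__ == "__main__":
    record = AIDecisionRecord(
        model_name="sepsis-screen",  # hypothetical model name
        model_version="1.2.0",
        input_digest=digest_inputs({"heart_rate": 118, "temp_c": 38.4}),
        ai_output="flag",
        reviewing_clinician="clinician-042",
        final_decision="no_flag",
        override_reason="Findings explained by documented post-operative course",
    )
    print(json.dumps({**asdict(record), "overridden": record.overridden}, indent=2))
```

Recording the model version alongside each decision lets an investigation separate a flawed model release from a flawed clinical judgment, which is exactly the question the paragraph above raises.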

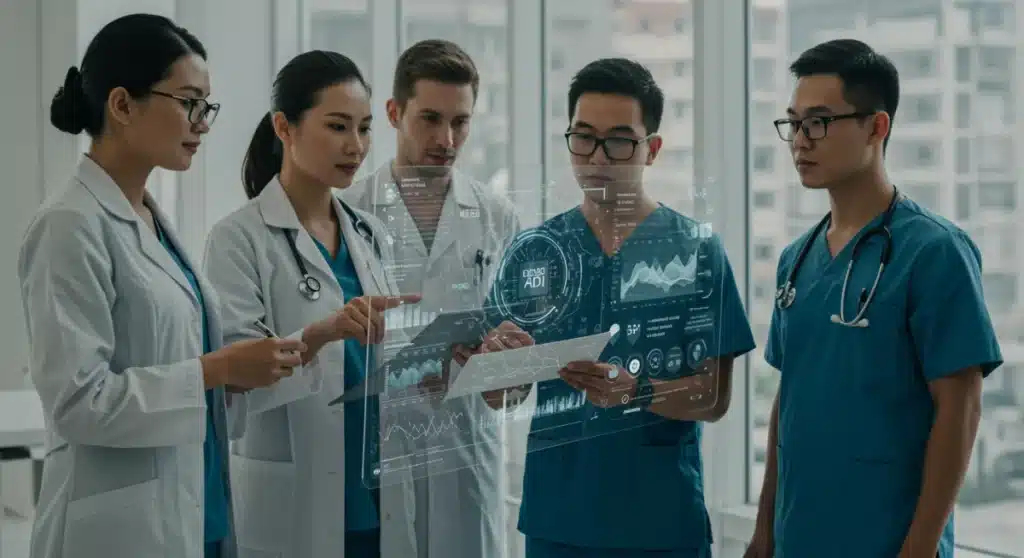

The role of human oversight and clinical judgment

Despite the advanced capabilities of AI, human oversight remains indispensable in healthcare. AI should be viewed as a tool to augment, not replace, human intelligence and clinical judgment. Clinicians must retain the ultimate responsibility for patient care decisions, utilizing AI insights as one component of a comprehensive evaluation.

Effective human oversight requires ongoing training for healthcare professionals to understand AI’s capabilities and limitations. It also necessitates robust protocols for reviewing AI-generated recommendations and intervening when necessary. This symbiotic relationship between human and artificial intelligence is key to ethical AI deployment.

- Continuous training: Educate clinicians on AI functionalities, limitations, and ethical implications.

- Decision support: Position AI as a decision support tool, not a definitive decision-maker.

- Emergency protocols: Establish clear procedures for human intervention when AI systems present anomalies or errors.
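The decision-support and emergency-protocol points can be made concrete in software. The sketch below, with a hypothetical confidence threshold of 0.8 and hypothetical plausible input ranges, decides how an AI recommendation is presented; no branch finalizes a decision, so every path still ends with a clinician. Real thresholds would need clinical validation for the specific model and setting.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

@dataclass
class Recommendation:
    label: str
    confidence: float  # model-reported score, expected in [0, 1]

def route(
    rec: Recommendation,
    inputs: Dict[str, float],
    valid_ranges: Dict[str, Tuple[float, float]],
    min_confidence: float = 0.8,  # hypothetical threshold
) -> Tuple[str, List[str]]:
    """Return how to present the recommendation and any problem fields.
    No route auto-finalizes: each one ends with clinician review."""
    missing = [name for name in valid_ranges if name not in inputs]
    out_of_range = [
        name for name, (lo, hi) in valid_ranges.items()
        if name in inputs and not lo <= inputs[name] <= hi
    ]
    problems = out_of_range + missing
    if not 0.0 <= rec.confidence <= 1.0:
        problems.append("confidence")
    if problems:
        # Anomalous input or output: hide the suggestion and escalate to a human.
        return "suppress_and_escalate", problems
    if rec.confidence < min_confidence:
        return "show_with_low_confidence_warning", []
    return "show_as_suggestion", []

if __name__ == "__main__":
    ranges = {"heart_rate": (20, 250), "age": (0, 120)}  # hypothetical plausibility limits
    print(route(Recommendation("sepsis_risk_high", 0.92), {"heart_rate": 400, "age": 61}, ranges))
```

Suppressing output on implausible inputs, rather than merely warning, is a deliberate choice in this sketch: a confident-looking recommendation built on a data-entry error is more likely to mislead than to help.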

By emphasizing human oversight, healthcare systems can ensure that AI enhances care without eroding the critical role of clinical expertise and empathy.

Regulatory landscape and compliance for 2026

The regulatory environment for AI in healthcare in the US is rapidly evolving. By 2026, we can anticipate more refined guidelines and potentially new legislation to address the unique challenges posed by AI. Healthcare providers must stay abreast of these developments to ensure continuous compliance and ethical practice.

Agencies like the FDA (Food and Drug Administration) are actively developing frameworks for AI/ML-based Software as a Medical Device (SaMD), while other bodies focus on data governance and ethical use. Compliance is not merely about avoiding penalties; it is about upholding the highest standards of patient care and safety.

Emerging standards and best practices

Beyond formal regulations, a growing body of standards and best practices is emerging from professional organizations, industry consortia, and research institutions. These often provide more granular guidance on specific aspects of AI development and deployment, such as data quality, model validation, and post-market surveillance.

Adopting these best practices, even when not legally mandated, can significantly strengthen an organization’s ethical posture and minimize risks. It demonstrates a commitment to responsible innovation and patient well-being, enhancing reputation and trust among patients and stakeholders.

- FDA guidance: Monitor and adhere to FDA guidelines for AI/ML-based medical devices.

- Industry standards: Participate in or follow industry-specific working groups on AI ethics and safety.

- Internal policies: Develop robust internal policies and procedures for AI governance and ethical review.
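Post-market surveillance often includes checking whether the data a deployed model sees has drifted away from the data it was validated on. As one illustrative technique (not an FDA requirement), the sketch below computes the population stability index (PSI): for each bin, (recent share minus baseline share) multiplied by the natural log of (recent share divided by baseline share), summed over all bins. The binning, feature names, and the 0.2 alert threshold are assumptions chosen for the example.

```python
import math
from typing import Dict, List, Sequence

def population_stability_index(
    expected_counts: Sequence[int], actual_counts: Sequence[int], eps: float = 1e-6
) -> float:
    """PSI between baseline (validation-time) and recent counts over the same bins."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("baseline and recent data must use the same bins")
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    psi = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # eps avoids log(0) and division by zero when a bin is empty
        e_share = max(e / e_total, eps)
        a_share = max(a / a_total, eps)
        psi += (a_share - e_share) * math.log(a_share / e_share)
    return psi

def drifted_features(
    baseline: Dict[str, List[int]], recent: Dict[str, List[int]], threshold: float = 0.2
) -> Dict[str, float]:
    """Return features whose PSI exceeds the (hypothetical) alert threshold."""
    scores = {f: population_stability_index(baseline[f], recent[f]) for f in baseline}
    return {f: round(s, 3) for f, s in scores.items() if s > threshold}

if __name__ == "__main__":
    baseline = {"age_band": [25, 25, 25, 25], "creatinine_band": [40, 30, 20, 10]}
    recent = {"age_band": [10, 20, 30, 40], "creatinine_band": [38, 31, 21, 10]}
    print(drifted_features(baseline, recent))
```

Input drift alone does not prove the model has become less accurate, but it is a cheap early signal that a deeper performance review, with outcome data, may be due.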

Proactive engagement with these evolving standards will be critical for US healthcare providers to successfully and ethically integrate AI by 2026.

Fostering a culture of ethical AI in healthcare organizations

Implementing ethical AI frameworks is not solely about policies and regulations; it also requires cultivating a strong organizational culture that prioritizes ethics. This involves leadership commitment, continuous education for all staff, and mechanisms for ethical review and feedback. An ethical culture ensures that AI initiatives are consistently aligned with the organization’s values and mission.

Healthcare organizations must move beyond a reactive approach to AI ethics, instead embedding ethical considerations into every stage of the AI lifecycle, from conception and design to deployment and ongoing monitoring. This proactive stance helps anticipate and mitigate potential ethical dilemmas before they escalate.

Establishing ethical review boards and committees

Dedicated ethical review boards or committees, similar to Institutional Review Boards (IRBs) for human research, can play a crucial role in overseeing AI initiatives. These bodies can evaluate proposed AI projects, assess their ethical implications, and ensure adherence to established frameworks and principles. Their independence and expertise are key to their effectiveness.

These committees should comprise diverse members, including clinicians, ethicists, data scientists, legal experts, and patient advocates. This multidisciplinary approach ensures a holistic consideration of ethical issues, reflecting the varied impacts of AI on different stakeholders.

- Multidisciplinary expertise: Include diverse perspectives on AI ethics committees.

- Regular review cycles: Conduct periodic reviews of AI systems in use to ensure ongoing ethical compliance.

- Feedback mechanisms: Create channels for staff and patients to report ethical concerns related to AI.
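Regular review cycles and feedback channels can be tracked with a simple inventory. The sketch below assumes a hypothetical `AISystem` record holding each system's last committee review date, its review interval, and the number of open concerns reported through feedback channels, and lists the systems the committee should take up next. The intervals and the trigger of three open concerns are placeholders a committee would set for itself.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Sequence, Tuple

@dataclass
class AISystem:
    name: str
    last_review: date
    review_interval_days: int
    open_concerns: int = 0  # reports received via staff and patient feedback channels

def systems_needing_review(
    systems: Sequence[AISystem], today: date, concern_trigger: int = 3
) -> List[Tuple[str, str]]:
    """List systems due for committee review, with the reason each was selected."""
    due = []
    for s in systems:
        next_review = s.last_review + timedelta(days=s.review_interval_days)
        if today >= next_review:
            due.append((s.name, "scheduled review overdue"))
        elif s.open_concerns >= concern_trigger:
            # Feedback can pull a review forward before its scheduled date.
            due.append((s.name, f"{s.open_concerns} open concerns"))
    return due

if __name__ == "__main__":
    inventory = [
        AISystem("imaging-triage", date(2025, 6, 1), 180),
        AISystem("readmission-risk", date(2026, 1, 15), 365, open_concerns=4),
        AISystem("scheduling-assistant", date(2026, 2, 1), 365),
    ]
    for name, reason in systems_needing_review(inventory, date(2026, 3, 1)):
        print(f"{name}: {reason}")
```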

By establishing robust ethical review processes, healthcare organizations can demonstrate their unwavering commitment to responsible AI innovation and patient-centric care.

The future of ethical AI integration in US patient care

Looking ahead to 2026 and beyond, the journey of integrating ethical AI into US patient care will be continuous. It will require ongoing adaptation, learning, and collaboration among all stakeholders. The goal is not just to implement AI, but to implement it wisely, ensuring that it genuinely enhances health outcomes, reduces disparities, and upholds the dignity and autonomy of every patient.

The future will likely see more sophisticated ethical AI tools, improved regulatory clarity, and a greater emphasis on participatory design, where patients and communities are involved in shaping the AI solutions that impact their lives. This forward-looking perspective positions ethical considerations not as barriers, but as fundamental enablers of transformative healthcare innovation.

Continuous learning and adaptation

The field of AI is dynamic, with new technologies and applications emerging constantly. Ethical frameworks and guidelines must therefore be flexible and adaptable, capable of evolving alongside technological advancements. Healthcare providers must foster a culture of continuous learning and critical evaluation to navigate this ever-changing landscape.

This includes investing in research on AI ethics, participating in national and international dialogues, and sharing best practices across the healthcare ecosystem. By remaining agile and responsive, the US healthcare system can ensure that AI integration remains ethical, effective, and beneficial for all.

- Research investment: Support studies on the ethical implications and societal impacts of AI in healthcare.

- Cross-sector collaboration: Engage with technology developers, policymakers, and patient advocacy groups.

- Policy iteration: Advocate for and adapt to evolving AI governance policies and regulations.

Ultimately, the successful future of AI in US patient care hinges on a steadfast commitment to ethical principles and a proactive approach to their implementation and refinement.

| Key Ethical Area | Description for 2026 |
|---|---|
| Algorithmic Fairness | Ensuring AI systems do not perpetuate or amplify health disparities based on demographic factors. |
| Data Privacy & Security | Protecting sensitive patient data from breaches and misuse, adhering to HIPAA and emerging regulations. |
| Transparency & Explainability | Making AI decisions understandable to clinicians and patients to foster trust and informed consent. |
| Accountability | Establishing clear responsibility for AI system outcomes and errors within healthcare settings. |

Frequently asked questions about ethical AI in healthcare

Why is ethical AI crucial in US patient care?

Ethical AI is crucial to ensure patient safety, maintain public trust, and prevent the exacerbation of health disparities. As AI becomes more integral to diagnostics and treatment, robust ethical frameworks are essential to guide its responsible implementation and protect vulnerable populations from unintended harm or bias.

What are the main data privacy concerns with AI in healthcare?

Primary concerns include unauthorized access to protected health information (PHI), potential re-identification of de-identified data, and the misuse of data for purposes beyond patient care. Adhering to HIPAA and implementing 'privacy-by-design' principles are critical to mitigating these risks and safeguarding patient confidentiality.

How can healthcare providers address algorithmic bias?

Addressing algorithmic bias involves using diverse and representative training datasets, employing bias detection and mitigation tools, and regularly auditing AI systems for equitable performance across all patient demographics. Continuous monitoring and human oversight are vital to ensure fairness and prevent discriminatory outcomes in AI-driven decisions.

Who is accountable when an AI system makes an error?

Determining accountability for AI errors is complex and often involves shared responsibility among AI developers, healthcare providers, and institutions. Clear policies, legal frameworks, and robust human oversight protocols are needed to define roles and ensure patient safety when AI systems contribute to adverse events or incorrect diagnoses.

What role does human oversight play?

Human oversight is paramount; AI should augment, not replace, clinical judgment. Healthcare professionals must critically evaluate AI recommendations, understand their limitations, and retain ultimate responsibility for patient care decisions. This ensures that AI serves as a supportive tool, enhancing efficiency while preserving the essential human element of medicine.

Conclusion

The integration of AI into US patient care by 2026 represents a major step forward, promising greater efficiency and improved outcomes. However, this progress must be anchored in a robust ethical foundation. By embracing comprehensive frameworks, prioritizing data privacy, ensuring algorithmic fairness, fostering transparency, and establishing clear accountability, healthcare providers can confidently navigate the complexities of AI. The future of healthcare is intelligent, but more importantly, it must be ethical, ensuring that technology serves the highest good of every patient.