Explainable AI in US Research: 2026 Transparency Best Practices

The Evolving Role of Explainable AI (XAI) in U.S. Research Projects: A 2026 Update on Best Practices for Transparency

In the rapidly advancing landscape of artificial intelligence, the demand for transparency and understanding is paramount. As we navigate 2026, explainability has moved from a theoretical ideal to a critical imperative, particularly within U.S. research projects. Modern AI systems are often so complex that they are described as ‘black boxes,’ which creates significant challenges when they are deployed in sensitive domains such as healthcare, finance, and national security. This article reviews the current state of XAI in U.S. research, outlines best practices for achieving transparency, examines the ethical considerations involved, and looks ahead to the field's open challenges.

The journey towards truly explainable AI is not merely a technical one; it’s a multidisciplinary endeavor that intertwines computer science, cognitive psychology, ethics, and policy. The insights gained from XAI are not just about debugging models; they are about building trust, ensuring accountability, and fostering a deeper understanding of how intelligent systems interact with our world. The U.S. research community, recognizing these profound implications, has been at the forefront of driving innovation in XAI, establishing frameworks and methodologies that are shaping global standards.

The Imperative for Explainable AI in 2026

Why has Explainable AI Research become such a critical focus in 2026? The answer lies in the increasing ubiquity and autonomy of AI systems. From predicting disease outbreaks to informing judicial decisions, AI’s influence is expanding. Without explainability, we risk deploying systems whose decisions are opaque, potentially biased, and ultimately untrustworthy. The call for transparency isn’t just coming from researchers; it’s resonating across regulatory bodies, industry leaders, and the general public.

In the U.S., various government agencies and academic institutions are funding and conducting extensive research into XAI. The National Science Foundation (NSF), DARPA (Defense Advanced Research Projects Agency), and NIST (National Institute of Standards and Technology) are among the key players. Their initiatives aim to develop foundational theories, practical tools, and standardized metrics for evaluating the explainability of AI systems. This concerted effort underscores a national commitment to responsible AI development.

The legal and ethical landscapes are also rapidly evolving. New regulations, both proposed and enacted, increasingly mandate some level of explainability for AI systems, particularly those that impact individuals’ rights or well-being. For instance, discussions around algorithmic accountability and the ‘right to explanation’ are gaining traction, pushing researchers to develop methods that can satisfy these emerging requirements. This regulatory pressure further fuels the urgency and importance of Explainable AI Research.

Defining Explainability: What Does it Mean in Practice?

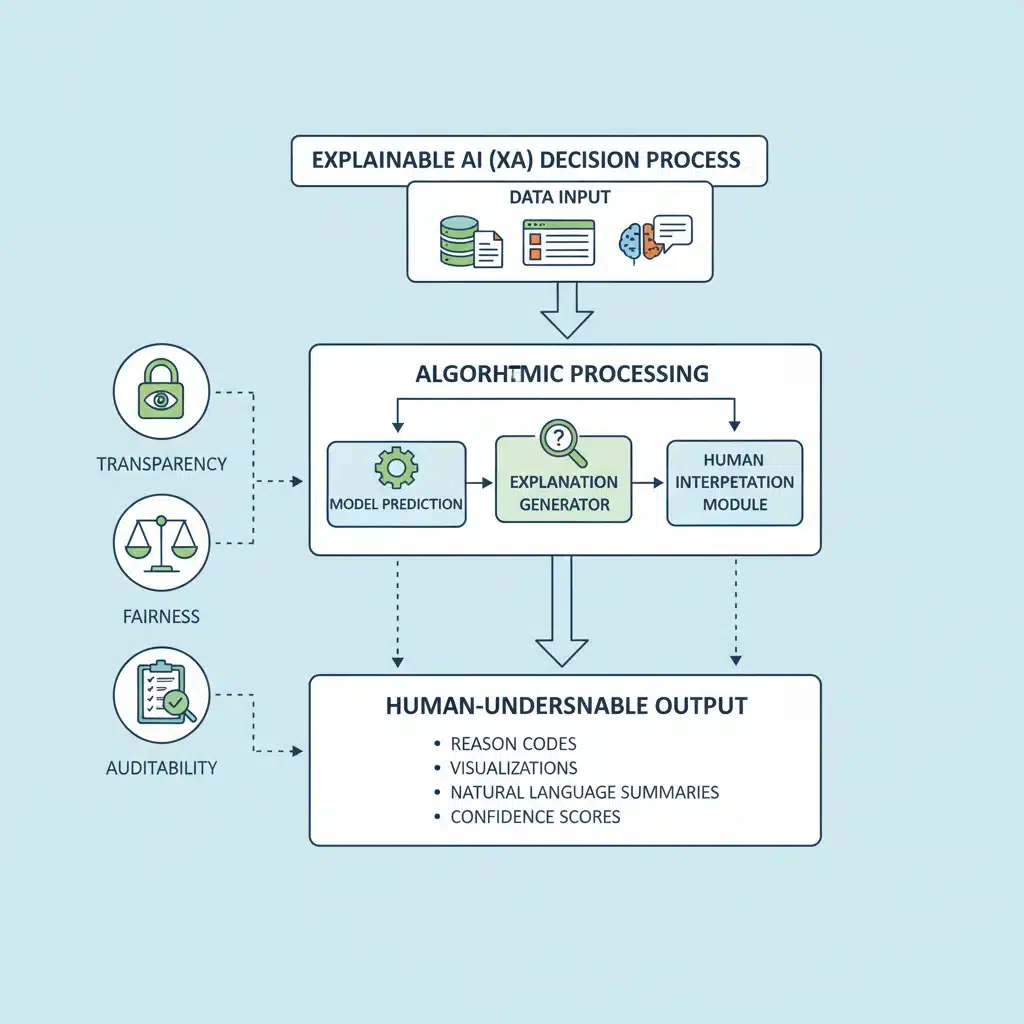

Before diving into best practices, it’s crucial to define what ‘explainability’ truly means in the context of AI. It’s not a monolithic concept, but rather a spectrum of approaches aimed at making AI systems more understandable to humans. This can range from interpreting individual predictions to comprehending the overall behavior of a model. Key aspects include:

- Interpretability: The degree to which a human can understand the cause of a decision.

- Transparency: The ability to inspect the internal workings of a model.

- Fidelity: How accurately an explanation reflects the model’s actual decision-making process.

- Trustworthiness: Whether an AI system merits the confidence users place in it; good explanations help users judge this.

- Actionability: The extent to which explanations provide insights that can lead to improvements or interventions.
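Fidelity can be made concrete in several ways. One simple operationalization, often applied to global surrogate explanations, is the fraction of inputs on which the explanation reproduces the model's decision. The sketch below assumes a hypothetical nonlinear "black box" and a hypothetical one-line rule offered as its explanation; both models and the sampling range are invented for illustration.

```python
import random

def black_box(x):
    """Hypothetical opaque model: approve (1) when a nonlinear score exceeds 1."""
    income, debt = x
    return 1 if 2 * income - debt ** 2 > 1 else 0

def surrogate(x):
    """Hypothetical one-line rule offered as an explanation of black_box."""
    income, debt = x
    return 1 if income > 0.5 + debt / 2 else 0

def fidelity(model, explanation, inputs):
    """Fraction of inputs on which the explanation reproduces the model's decision."""
    agreements = sum(model(x) == explanation(x) for x in inputs)
    return agreements / len(inputs)

rng = random.Random(0)
samples = [(rng.uniform(0, 2), rng.uniform(0, 2)) for _ in range(1000)]
print(f"fidelity of the rule: {fidelity(black_box, surrogate, samples):.2f}")
```

A score below 1.0 signals that the rule is a simplification: users who rely on it will sometimes be told the wrong reason for a decision.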

Different stakeholders require different types of explanations. A data scientist might need detailed insights into model parameters, while a doctor might need to understand why an AI recommended a particular treatment for a patient. Understanding these varying needs is fundamental to effective Explainable AI Research.

Best Practices for Transparency in U.S. XAI Research (2026)

As of 2026, U.S. research projects in XAI are coalescing around several key best practices to enhance transparency. These practices are not static but are continually refined through ongoing research and real-world application.

1. Human-Centric Design of Explanations

A core principle of effective XAI is designing explanations with the human user in mind. This means moving beyond purely technical metrics and focusing on cognitive psychology and user experience. Researchers are increasingly employing user studies to evaluate the effectiveness of explanations, ensuring they are comprehensible, relevant, and actionable for their intended audience.

- Contextual Relevance: Explanations should be tailored to the specific context and the user’s level of expertise. A domain expert will require different types of information than a layperson.

- Interactive Explanations: Providing interactive tools that allow users to probe model decisions, ask ‘what-if’ questions, and explore counterfactuals significantly enhances understanding and trust.

- Visualizations: Effective visual representations of model behavior, feature importance, and decision boundaries are crucial for conveying complex information intuitively.

- Natural Language Explanations: Generating human-readable summaries and justifications for AI decisions is a major area of Explainable AI Research, making AI more accessible to non-technical users.
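The ‘what-if’ and counterfactual interactions described above can be prototyped in a few lines. This is a minimal sketch under stated assumptions: the three-feature loan model, its weights, the applicant's values, and the brute-force grid search are all hypothetical, chosen only to show the shape of the interaction.

```python
from itertools import product

def loan_model(features):
    """Hypothetical scoring model: approve (1) if the weighted score reaches 0.6."""
    score = 0.5 * features["income"] + 0.3 * features["credit"] - 0.4 * features["debt"]
    return 1 if score >= 0.6 else 0

def what_if(model, features, name, value):
    """Answer one 'what if feature `name` were `value`?' query without mutating the input."""
    return model(dict(features, **{name: value}))

def nearest_counterfactual(model, features, step=0.1, max_steps=10):
    """Grid-search feature changes; return (cost, candidate) for the smallest L1 change
    that flips the model's decision, or None if no change on the grid flips it."""
    original = model(features)
    names = list(features)
    offsets = [i * step for i in range(-max_steps, max_steps + 1)]
    best = None
    for deltas in product(offsets, repeat=len(names)):
        cost = sum(abs(d) for d in deltas)
        if best is not None and cost >= best[0]:
            continue  # cannot beat the best counterfactual found so far
        candidate = {n: features[n] + d for n, d in zip(names, deltas)}
        if model(candidate) != original:
            best = (cost, candidate)
    return best

applicant = {"income": 0.8, "credit": 0.5, "debt": 0.4}   # normalized, hypothetical values
print(loan_model(applicant))                                # current decision
print(what_if(loan_model, applicant, "income", 2.0))        # what if income were 2.0?
print(nearest_counterfactual(loan_model, applicant))
```

Measuring cost as an L1 distance over raw features treats a unit of income like a unit of debt; practical counterfactual methods usually normalize features and restrict changes to ones the user can actually act on.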

2. Integrating XAI from Inception

One of the most significant shifts in 2026 is the move towards integrating XAI considerations from the very beginning of the AI development lifecycle, rather than as an afterthought. This ‘explainability-by-design’ approach ensures that transparency is a foundational element, not an add-on.

- Data Understanding: Understanding data biases, provenance, and quality is the first step towards explainable models. XAI research now emphasizes tools for data introspection and bias detection.

- Model Selection: Choosing inherently interpretable models (e.g., decision trees, linear models) where appropriate, or designing complex models with interpretable components.

- Algorithm Development: Developing new algorithms that are intrinsically more explainable, or frameworks that can generate high-fidelity explanations for complex models.

- Evaluation Metrics: Incorporating explainability metrics alongside traditional performance metrics during model training and validation.
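For the Model Selection point, a linear model is the simplest case where the model is its own explanation. The sketch below fits ordinary least squares with the normal equations in plain Python; the synthetic data, the true coefficients, and the feature names "income" and "debt" are assumptions made for illustration.

```python
import random

def fit_linear(X, y):
    """Ordinary least squares via the normal equations (X^T X) w = X^T y.
    A leading column of ones is added, so w[0] is the intercept."""
    rows = [[1.0] + list(x) for x in X]
    k = len(rows[0])
    # Augmented matrix [X^T X | X^T y]
    M = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * t for r, t in zip(rows, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(k):
            if r != col:
                f = M[r][col] / M[col][col]
                M[r] = [a - f * b for a, b in zip(M[r], M[col])]
    return [M[i][k] / M[i][i] for i in range(k)]

# Synthetic data with a known relationship (hypothetical features "income" and "debt").
rng = random.Random(42)
X = [(rng.uniform(0, 1), rng.uniform(0, 1)) for _ in range(200)]
y = [2.0 + 3.0 * inc - 1.5 * debt + rng.gauss(0, 0.1) for inc, debt in X]

intercept, w_income, w_debt = fit_linear(X, y)
# The fitted coefficients are the explanation: each is the change in prediction
# per unit of that feature, holding the other feature fixed.
print(f"prediction = {intercept:.2f} + {w_income:.2f}*income + {w_debt:.2f}*debt")
```

The trade-off is expressiveness: if the true relationship is strongly nonlinear, a linear model's clear explanation may describe a poor model.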

3. Diverse XAI Techniques for Diverse Models

No single XAI technique fits all AI models or use cases. U.S. research projects are exploring and refining a wide array of techniques, categorized broadly into local (explaining individual predictions) and global (explaining overall model behavior) methods, and model-agnostic (can be applied to any model) versus model-specific approaches.

- LIME (Local Interpretable Model-agnostic Explanations): A popular technique that explains the predictions of any classifier or regressor by approximating it locally with an interpretable model.

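- SHAP (SHapley Additive exPlanations): Grounded in cooperative game theory, SHAP explains an individual prediction by assigning each feature its Shapley value, that is, the feature's average marginal contribution to the prediction across all subsets of the other features. The resulting attributions are additive: they sum to the difference between the model's prediction and a baseline (expected) prediction.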

- Counterfactual Explanations: Identifying the smallest change to the input features that would alter the model’s prediction, providing actionable insights into what circumstances would lead to a different outcome.

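- Attention Mechanisms: Increasingly used in deep learning, especially in natural language processing and computer vision, attention weights can highlight which parts of the input the model ‘paid attention to’ when making a decision, although research has questioned whether attention weights faithfully reflect what actually drove a prediction.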

- Concept Bottleneck Models (CBMs): These models first predict a set of human-understandable concepts and then make the final prediction from those concepts alone, so each decision can be traced to concept-level reasoning by design.
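The core idea behind LIME, fitting a simple model that is only valid near one input, can be sketched without the `lime` package. This is a simplified illustration, not that library's algorithm: it assumes Gaussian perturbations, an exponential proximity kernel, a hypothetical black-box function, and arbitrary values for `sigma` and `kernel_width`.

```python
import math
import random

def solve(A, b):
    """Solve the square linear system A w = b by Gauss-Jordan elimination."""
    k = len(b)
    M = [row[:] + [v] for row, v in zip(A, b)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(k):
            if r != col:
                f = M[r][col] / M[col][col]
                M[r] = [a - f * c for a, c in zip(M[r], M[col])]
    return [M[i][k] / M[i][i] for i in range(k)]

def lime_style_explanation(model, x, n_samples=500, sigma=0.1, kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear surrogate around x; return per-feature local slopes."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        z = [xi + rng.gauss(0, sigma) for xi in x]            # perturb around the instance
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        weights.append(math.exp(-dist2 / kernel_width ** 2))   # closer samples count more
        rows.append([1.0] + z)                                 # leading 1.0 = intercept term
        targets.append(model(z))
    k = len(rows[0])
    # Weighted normal equations: (X^T W X) w = X^T W y
    A = [[sum(w * r[i] * r[j] for w, r in zip(weights, rows)) for j in range(k)] for i in range(k)]
    b = [sum(w * r[i] * t for w, r, t in zip(weights, rows, targets)) for i in range(k)]
    return solve(A, b)[1:]                                     # drop the intercept

def black_box(z):
    """Hypothetical nonlinear model: quadratic in feature 0, linear in feature 1."""
    return z[0] ** 2 + 3.0 * z[1]

print(lime_style_explanation(black_box, [1.0, 2.0]))
```

Near the point (1, 2) the surrogate's slopes approximate the model's local gradient, roughly 2 for feature 0 and 3 for feature 1. At a different point the quadratic feature would receive a different slope, which is exactly why such explanations are called local.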

The current focus in Explainable AI Research is not just on developing these techniques but on systematically evaluating their effectiveness, robustness, and potential for manipulation.
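SHAP, listed above, rests on the Shapley value. For a model with only a few features, Shapley values can be computed exactly by enumerating every subset of features. The sketch below assumes a single-baseline value function (features outside a subset are set to a baseline input) and a hypothetical three-feature model with one interaction term. The `shap` library itself uses background datasets and model-specific approximations, so its numbers can differ.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values with a baseline value function:
    v(S) = model evaluated with features in S taken from x, the rest from baseline."""
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(z)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Weight |S|! (n - |S| - 1)! / n! from the Shapley formula
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for s in combinations(others, size):
                s = set(s)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis

def model(z):
    """Hypothetical model with an interaction between features 0 and 1."""
    return 2.0 * z[0] + z[0] * z[1] - 0.5 * z[2]

x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
print(phi)
```

Here the interaction term `z[0] * z[1]` contributes 2 to the prediction and is split equally between features 0 and 1, giving attributions of 3, 1, and -1.5. They sum to `model(x) - model(baseline)` = 2.5, the additivity property described above.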

4. Standardized Evaluation and Benchmarking

A critical challenge in XAI has been the lack of standardized methods for evaluating the quality of explanations. In 2026, progress is being made in this area through initiatives like NIST’s AI Risk Management Framework, which identifies explainability and interpretability as characteristics of trustworthy AI and calls for measuring them.

- Quantitative Metrics: Developing metrics to measure fidelity, stability, complexity, and other attributes of explanations.

- Qualitative User Studies: Conducting rigorous human-in-the-loop experiments to assess how well explanations help users understand, trust, and effectively use AI systems.

- Shared Datasets and Tasks: Creating benchmark datasets and common tasks for XAI evaluation to allow for consistent comparison of different techniques.

- Auditing Frameworks: Developing methodologies for auditing AI systems for compliance with explainability requirements, ensuring accountability and mitigating risks.
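As an example of a quantitative metric, one definition of explanation complexity proposed in the literature is the entropy of each feature's share of the total absolute attribution. The sketch below assumes attributions have already been computed by some method; the two attribution vectors are invented.

```python
import math

def explanation_complexity(attributions):
    """Entropy (in nats) of the fractional contributions |a_i| / sum_j |a_j|.
    0 means all attribution mass sits on one feature; log(n) means it is spread evenly."""
    total = sum(abs(a) for a in attributions)
    if total == 0:
        return 0.0
    entropy = 0.0
    for a in attributions:
        p = abs(a) / total
        if p > 0:
            entropy -= p * math.log(p)
    return entropy

concentrated = [3.0, 0.0, 0.0, 0.0]   # hypothetical: one feature explains everything
diffuse = [1.0, -1.0, 1.0, -1.0]      # hypothetical: four features share equally
print(explanation_complexity(concentrated), explanation_complexity(diffuse))
```

The concentrated explanation scores 0 and the diffuse one scores log(4), about 1.386. Lower complexity usually means an easier explanation to read, but it should be reported alongside fidelity, since a simple explanation can still be an unfaithful one.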

Ethical Considerations and Responsible XAI Development

Transparency alone is not sufficient for responsible AI. Explainable AI Research in the U.S. is deeply intertwined with ethical considerations, recognizing that explanations can also be misused or misleading. The goal is not just to make AI understandable, but to make it fair, accountable, and beneficial.

Addressing Bias and Fairness

XAI plays a crucial role in identifying and mitigating algorithmic bias. By explaining why a model makes certain predictions, researchers can pinpoint features or data points that contribute to unfair outcomes, leading to more equitable AI systems. However, simply revealing bias isn’t enough; research is also focused on developing techniques to correct it.

- Bias Detection through Explanations: Using XAI to identify discriminatory patterns in data or model behavior.

- Fairness-Aware XAI: Developing explanation methods that explicitly highlight fairness-related issues and suggest interventions.

- Explainable De-biasing: Creating methods to de-bias models while ensuring the de-biasing process itself is transparent.
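A common starting point for bias detection is a group fairness metric such as demographic parity difference. It flags a disparity, which feature attributions can then help trace to its sources. The predictions and group labels below are invented for illustration.

```python
def selection_rate(predictions, groups, group):
    """Share of positive predictions within one group."""
    members = [p for p, g in zip(predictions, groups) if g == group]
    return sum(members) / len(members)

def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = {g: selection_rate(predictions, groups, g) for g in set(groups)}
    return max(rates.values()) - min(rates.values()), rates

preds = [1, 1, 0, 1, 0, 0, 1, 0]                    # hypothetical model decisions
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]   # hypothetical group membership
gap, rates = demographic_parity_difference(preds, groups)
print(rates, gap)
```

Group A is selected 75% of the time and group B 25%, a gap of 0.5. The metric only says that outcomes differ. Explaining why, for example by finding a feature that acts as a proxy for group membership, is where XAI methods come in.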

Accountability and Governance

For AI systems used in high-stakes decisions, accountability is paramount. XAI provides the necessary tools for auditing and understanding why a decision was made, allowing for the assignment of responsibility. This is crucial for legal compliance and public trust.

- Audit Trails: XAI techniques can feed comprehensive audit trails: recording the explanation for each AI decision documents the factors that led to a particular outcome.

- Regulatory Compliance: Explanations are becoming essential for demonstrating compliance with regulations such as the EU's GDPR, which has influenced U.S. discussions, and with sector-specific guidelines.

- Ethical Review Boards: The involvement of ethical review boards and interdisciplinary teams in Explainable AI Research ensures that ethical considerations are embedded throughout the development process.
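An audit trail entry can be as simple as a structured record that stores each decision together with the explanation produced for it. The schema below is hypothetical: the field names, the model version string, and the use of a SHA-256 digest are illustrative choices, not a standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

@dataclass
class DecisionRecord:
    """Hypothetical audit-trail entry: one AI decision plus the explanation shown for it."""
    model_version: str
    inputs: dict
    prediction: float
    attributions: dict
    explanation_method: str
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        # sort_keys makes the serialization deterministic, so the digest is reproducible.
        return json.dumps(asdict(self), sort_keys=True)

    def digest(self) -> str:
        """SHA-256 of the serialized record; storing it separately lets auditors detect later edits."""
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()

record = DecisionRecord(
    model_version="credit-model-1.4.2",
    inputs={"income": 52000, "debt_ratio": 0.31},
    prediction=0.82,
    attributions={"income": 0.12, "debt_ratio": -0.05},
    explanation_method="SHAP",
)
print(record.to_json())
print(record.digest())
```

Keeping the model version and explanation method in every record matters: an explanation can only be audited if one can reconstruct which model produced the decision and how the explanation was generated.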

Preventing Misinformation and Manipulation

An emerging ethical challenge is the potential for explanations to be manipulated or to create a false sense of trust. Researchers are exploring how to make XAI robust against such attacks and how to communicate uncertainties effectively.

- Robustness of Explanations: Developing XAI methods that are stable and consistent, even with minor perturbations to the input.

- Communicating Uncertainty: Explanations must not only convey what the model decided but also the confidence level and potential uncertainties associated with that decision.

- Ethical Guidelines for Explanation Design: Establishing principles for designing explanations that are truthful, unbiased, and do not mislead users.
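The robustness requirement can be tested directly. One approach is to perturb an input slightly and record the largest resulting change in the explanation, a "max-sensitivity" style check. The sketch assumes a hypothetical model f(x) = tanh(w · x) with made-up weights, gradient-times-input attributions, and an arbitrary perturbation radius.

```python
import math
import random

WEIGHTS = [1.5, -2.0, 0.5]  # hypothetical model parameters

def attribution(x):
    """Gradient x input for f(x) = tanh(w . x); the gradient is (1 - tanh^2(w . x)) * w."""
    s = math.tanh(sum(w * xi for w, xi in zip(WEIGHTS, x)))
    return [(1 - s * s) * w * xi for w, xi in zip(WEIGHTS, x)]

def max_sensitivity(explain, x, radius=0.05, n_samples=200, seed=0):
    """Largest L2 change in the explanation over random perturbations of each feature by at most `radius`."""
    rng = random.Random(seed)
    base = explain(x)
    worst = 0.0
    for _ in range(n_samples):
        z = [xi + rng.uniform(-radius, radius) for xi in x]
        change = math.sqrt(sum((a - b) ** 2 for a, b in zip(explain(z), base)))
        worst = max(worst, change)
    return worst

x = [0.2, 0.1, 0.4]
print(f"max sensitivity at x: {max_sensitivity(attribution, x):.4f}")
```

Random sampling only gives a lower bound on the true worst case. An adversary searching deliberately for a perturbation can find larger changes, which is why robustness against manipulation remains an open research question.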

Challenges and Future Directions in U.S. XAI Research (2026 and Beyond)

Despite significant progress, Explainable AI Research still faces persistent challenges, even as promising new research directions emerge.

Scalability and Computational Cost

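Many XAI techniques, especially those that rely on perturbing inputs or on combinatorial computations, can be computationally expensive. Exact Shapley values, for example, require evaluating the model on every subset of features, a number that doubles with each added feature. Scaling these methods to large, real-world AI models without sacrificing explanation quality or response time remains a significant challenge, and future research will focus on developing more efficient and scalable XAI algorithms.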
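A standard way to reduce the cost of Shapley-based explanations is Monte Carlo sampling. Instead of enumerating every subset of features, the method averages each feature's marginal contribution over random feature orderings, so the cost grows linearly with the number of features for a fixed number of samples. The sketch assumes a single baseline input and a hypothetical three-feature model; `n_permutations` trades accuracy for speed.

```python
import random

def shapley_permutation_estimate(model, x, baseline, n_permutations=2000, seed=0):
    """Approximate Shapley values by averaging marginal contributions over random feature orderings."""
    rng = random.Random(seed)
    n = len(x)
    totals = [0.0] * n
    for _ in range(n_permutations):
        order = list(range(n))
        rng.shuffle(order)
        z = list(baseline)
        prev = model(z)
        for i in order:
            z[i] = x[i]             # add feature i to the coalition
            curr = model(z)
            totals[i] += curr - prev
            prev = curr
    return [t / n_permutations for t in totals]

def model(z):
    """Hypothetical model with an interaction between features 0 and 1."""
    return 2.0 * z[0] + z[0] * z[1] - 0.5 * z[2]

x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
estimate = shapley_permutation_estimate(model, x, baseline)
print(estimate)
```

Each sampled ordering costs n + 1 model evaluations, compared with an exponential number for exact enumeration. Because every ordering's contributions sum to `model(x) - model(baseline)`, the estimates stay additive even though each individual value carries sampling noise.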

Human-AI Collaboration and Trust

The ultimate goal of XAI is to foster effective human-AI collaboration. This involves not only making AI understandable but also designing interfaces that facilitate seamless interaction and build appropriate levels of trust. Research into human-computer interaction (HCI) and cognitive science will continue to play a vital role.

- Adaptive Explanations: Developing XAI systems that can adapt explanations based on user feedback and changing contexts.

- Trust Calibration: Researching how explanations influence human trust and how to calibrate this trust appropriately to avoid both over-reliance and under-reliance on AI.

- Explainable Reinforcement Learning: A particularly challenging area, as RL agents learn through trial and error, making their decision-making processes inherently difficult to explain.
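Trust calibration has a measurable counterpart on the model side. If a system shows users its confidence, that confidence should match how often it is actually right. Expected calibration error (ECE) quantifies the mismatch. It does not measure human trust, which still requires user studies, but a poorly calibrated model gives users no sound basis for calibrating their reliance. The confidence values and outcomes below are invented.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy across equal-width confidence bins."""
    bins = [[] for _ in range(n_bins)]
    for c, ok in zip(confidences, correct):
        idx = min(int(c * n_bins), n_bins - 1)   # confidence 1.0 goes in the top bin
        bins[idx].append((c, ok))
    total = len(confidences)
    ece = 0.0
    for b in bins:
        if b:
            avg_conf = sum(c for c, _ in b) / len(b)
            accuracy = sum(ok for _, ok in b) / len(b)
            ece += len(b) / total * abs(avg_conf - accuracy)
    return ece

outcomes = [1] * 6 + [0] * 4                                  # 6 of 10 predictions correct
overconfident = expected_calibration_error([0.95] * 10, outcomes)
well_calibrated = expected_calibration_error([0.6] * 10, outcomes)
print(overconfident, well_calibrated)
```

Both hypothetical models are right 60% of the time. The one that claims 95% confidence has an ECE of about 0.35, so displaying its confidence would encourage over-reliance.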

Multi-Modal XAI

As AI systems become increasingly multi-modal (e.g., combining vision, language, and audio), XAI techniques must evolve to explain decisions based on diverse data types. This involves developing methods that can integrate and explain information from different modalities coherently.

Regulatory Harmonization and Global Standards

While the U.S. is making strides, the global nature of AI development necessitates international collaboration on XAI standards and best practices. Harmonizing regulatory frameworks and research efforts will be crucial for the widespread adoption of responsible AI.

The Role of Causal Explanations

Current XAI often focuses on correlational explanations (e.g., ‘feature X contributed to prediction Y’). A more advanced frontier in Explainable AI Research is causal explanations, which aim to establish whether and how certain features actually cause specific outcomes. This is critical for scientific discovery and for building truly robust and reliable AI systems.

Causal inference techniques, when integrated with XAI, can move beyond simply identifying influential factors to understanding the underlying mechanisms. This shift promises to unlock deeper insights into complex systems and to facilitate more targeted interventions. For instance, in medical AI, understanding not just that a certain biomarker is associated with a disease but that it causally contributes to it can lead to more effective treatments.
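The gap between association and causation in the biomarker example can be shown with a small simulation. This sketch assumes a hypothetical structural causal model: an unobserved factor U raises both the biomarker B and the disease score D, and B has a small direct effect of 0.2 on D. All coefficients are invented.

```python
import random

def simulate(n, rng, intervene_b=None):
    """Hypothetical structural causal model: U -> B, U -> D, and a direct effect B -> D of 0.2.
    If intervene_b is given, B is set to that value (do(B = intervene_b)) instead of depending on U."""
    data = []
    for _ in range(n):
        u = rng.gauss(0, 1)                                          # unobserved confounder
        b = u + rng.gauss(0, 0.5) if intervene_b is None else intervene_b
        d = 0.2 * b + 1.0 * u + rng.gauss(0, 0.5)
        data.append((b, d))
    return data

def slope(pairs):
    """Ordinary least-squares slope of d on b."""
    n = len(pairs)
    mb = sum(b for b, _ in pairs) / n
    md = sum(d for _, d in pairs) / n
    cov = sum((b - mb) * (d - md) for b, d in pairs)
    var = sum((b - mb) ** 2 for b, _ in pairs)
    return cov / var

rng = random.Random(0)
observational = simulate(20000, rng)
print(f"observational slope: {slope(observational):.2f}")   # mixes causal effect and confounding

# Interventional estimate: compare mean outcomes under do(B=1) and do(B=0).
high = simulate(20000, rng, intervene_b=1.0)
low = simulate(20000, rng, intervene_b=0.0)
effect = sum(d for _, d in high) / len(high) - sum(d for _, d in low) / len(low)
print(f"interventional effect: {effect:.2f}")
```

The observational slope comes out near 1.0, while the true causal effect is 0.2. A correlational explanation would overstate the biomarker's role fivefold here. In real settings U is often unobserved and interventions may be impossible, which is why causal inference methods are needed to estimate the second number from data that only resembles the first.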

Interdisciplinary Collaboration

The future of Explainable AI Research will increasingly rely on strong interdisciplinary collaboration. Computer scientists, ethicists, legal scholars, social scientists, and domain experts must work together to build AI systems that are not only technically sound but also socially responsible and human-centric. This collaborative approach will ensure that XAI addresses real-world needs and challenges effectively.

Education and Training

As XAI becomes more integral to AI development, there is a growing need for education and training in this area. Universities and research institutions are developing curricula that cover XAI principles, techniques, and ethical considerations. Empowering the next generation of AI developers with XAI knowledge is crucial for embedding transparency and accountability into future AI systems.

Conclusion

The year 2026 marks a pivotal moment for Explainable AI Research in the U.S. The drive for transparency, fueled by technological advancements, ethical imperatives, and regulatory pressures, has solidified XAI’s position as a cornerstone of responsible AI development. Best practices are emerging around human-centric design, early integration of XAI, diverse technique application, and standardized evaluation. While challenges remain in scalability, computational cost, and the pursuit of causal explanations, the commitment from the U.S. research community to address these issues is unwavering.

The evolving role of XAI is not just about dissecting black boxes; it’s about building a future where AI systems are not only intelligent but also trustworthy, fair, and aligned with human values. As U.S. research continues to push the boundaries of what’s possible, the insights gained from Explainable AI Research will undoubtedly shape the global trajectory of AI, ensuring that these powerful technologies serve humanity responsibly and effectively.

The journey is ongoing, but with sustained effort and collaboration, the promise of truly transparent and understandable AI is well within reach, paving the way for a more accountable and beneficial AI-driven future.