Ethical AI Audits: Reduce 2026 Compliance Risk by 15%

Ethical AI audits help organizations identify and mitigate risks before they become violations. Through greater transparency and accountability, they can reduce compliance exposure by a projected 15% in 2026.

Are you prepared for the complex landscape of AI governance in 2026? The need for robust ethical AI audits has never been greater: done well, they can reduce compliance risk by a projected 15% in the coming year. This deep dive will equip you with the knowledge to navigate this evolving domain, ensuring your AI initiatives are not only innovative but also responsible and compliant.

The Rising Imperative of Ethical AI Audits in 2026

As artificial intelligence continues its rapid integration across industries, the ethical implications and regulatory scrutiny are intensifying. Organizations are no longer simply striving for AI efficiency; they are now facing a crucial mandate to ensure their AI systems are fair, transparent, and accountable. This shift underscores the growing importance of ethical AI audits, which are becoming a cornerstone of responsible AI development and deployment.

In 2026, the regulatory environment for AI is anticipated to be more stringent and globally interconnected. Companies operating in the United States, for instance, will need to contend with a patchwork of federal and state regulations, potentially including new guidelines from entities like NIST and emerging state-specific AI laws. Ethical AI audits are not merely about compliance; they are about building trust with consumers, stakeholders, and regulatory bodies, which directly affects market reputation and long-term viability.

Understanding the Evolving Regulatory Landscape

The legal framework surrounding AI is a moving target, making proactive auditing essential. New legislation and industry standards are continually being introduced to address concerns about bias, privacy, and algorithmic transparency. Staying ahead means understanding these changes and integrating them into your audit processes.

- NIST AI Risk Management Framework: Expect wider adoption of NIST’s framework, which is voluntary rather than enforced but provides a blueprint for managing AI risks.

- State-Specific AI Laws: California, New York, and other states are pioneering AI legislation, creating varied compliance requirements.

- International Harmonization Efforts: Efforts to align global AI regulations will influence local compliance strategies, requiring a broader perspective.

The proactive adoption of ethical AI audits positions an organization not just as compliant, but as a leader in responsible innovation. By anticipating regulatory shifts and embedding ethical considerations from the outset, companies can avoid costly penalties and reputational damage while fostering a culture of trust around their AI products and services.

Establishing a Robust AI Governance Framework

A successful ethical AI audit begins with a strong governance framework. This framework acts as the backbone, defining roles, responsibilities, policies, and procedures for the entire AI lifecycle. Without a clear governance structure, even the most well-intentioned audit efforts can fall short, leading to inconsistencies and gaps in compliance. In 2026, a fragmented approach to AI governance will simply not suffice.

Developing this framework involves cross-functional collaboration, bringing together legal, technical, ethical, and business stakeholders. Each group contributes unique perspectives and expertise, ensuring that the governance structure is comprehensive and addresses all potential areas of risk. This integrated approach helps to embed ethical considerations into every stage of AI development, from ideation to deployment and monitoring.

Key Components of Effective AI Governance

Establishing clear guidelines for data handling, model development, and deployment is paramount. This includes defining acceptable risk tolerances and establishing clear pathways for addressing ethical dilemmas as they arise. A robust framework ensures that ethical considerations are not an afterthought but an integral part of the AI development process.

- Defined Roles and Responsibilities: Clearly assign who is accountable for AI ethics, risk management, and compliance across the organization.

- Policy Development: Create comprehensive policies covering data privacy, algorithmic fairness, transparency, and human oversight.

- Continuous Monitoring Protocols: Implement systems for ongoing monitoring of AI models in production to detect and address drift or emerging biases.

- Stakeholder Engagement: Establish mechanisms for engaging with internal and external stakeholders on AI ethics and impact.
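As one illustration of the continuous monitoring protocol listed above, the sketch below computes a Population Stability Index (PSI) between the scores a model produced at validation time and the scores it produces in production. PSI is a swapped-in technique chosen for illustration, not something the framework prescribes; the function name, bin count, and smoothing constant are assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two score samples with the Population Stability Index."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant baseline

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical score samples: production scores have shifted upward.
validation_scores = [i / 100 for i in range(100)]
production_scores = [0.5 + i / 200 for i in range(100)]
psi = population_stability_index(validation_scores, production_scores)
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, but the threshold an organization adopts should come from its own risk tolerance policy.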

A robust, well-maintained AI governance framework lays the groundwork for effective ethical AI audits. This proactive stance not only minimizes compliance risks but also cultivates a culture where ethical considerations are a core value, driving responsible innovation and fostering public trust.

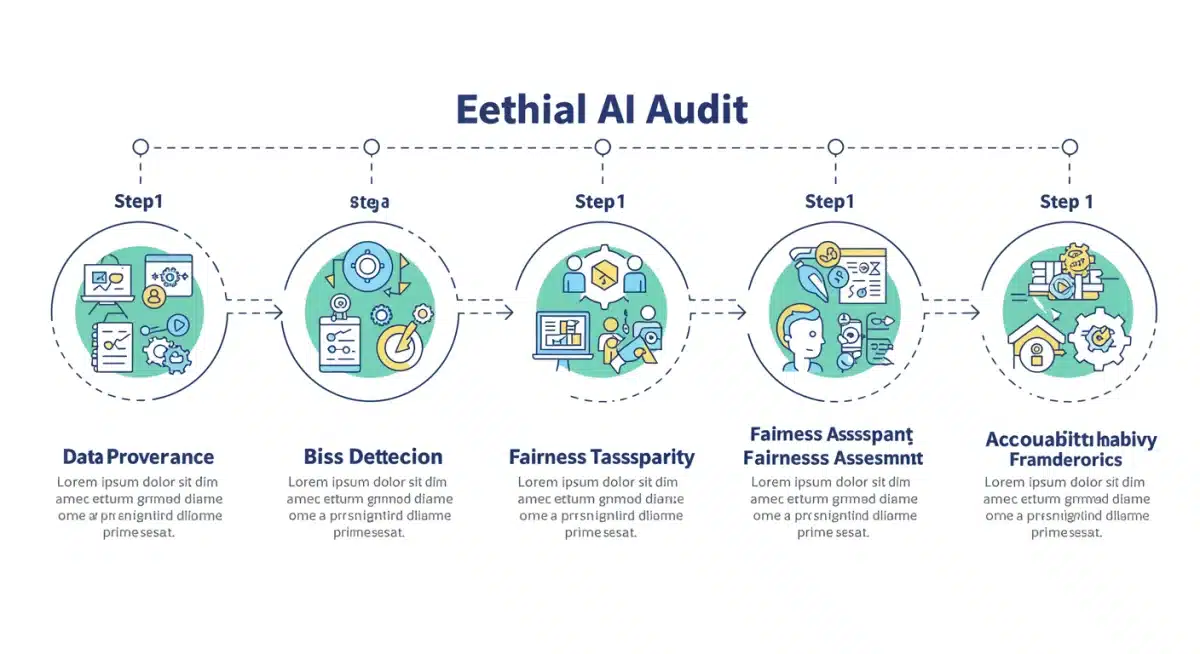

Insider Strategies for Proactive Bias Detection and Mitigation

Bias in AI systems remains one of the most critical challenges, threatening fairness, equity, and compliance. An effective ethical AI audit therefore looks for bias proactively: rather than reacting to harms after deployment, it aims to identify and address potential biases early in the development cycle, which reduces downstream risks and costs.

Understanding the sources of bias is the first step. These can range from biased training data to flawed algorithmic design or even how models are deployed and interpreted. In 2026, advanced techniques for identifying subtle biases will be crucial, requiring a blend of statistical analysis, domain expertise, and human judgment. Simply relying on aggregated metrics might mask biases affecting minority groups or specific demographics.
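To make the point about aggregated metrics concrete, here is a minimal sketch that reports accuracy overall and per group. The data is a hypothetical toy set and the function name is an assumption; the only purpose is to show how a healthy-looking aggregate can hide poor performance for a small group.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return (overall accuracy, {group: accuracy})."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        hits[group] += int(truth == pred)
    overall = sum(hits.values()) / sum(totals.values())
    per_group = {g: hits[g] / totals[g] for g in totals}
    return overall, per_group


# Hypothetical toy data: 90 records from group "A", 10 from group "B".
y_true = [1] * 100
y_pred = [1] * 90 + [0] * 6 + [1] * 4
groups = ["A"] * 90 + ["B"] * 10

overall, per_group = accuracy_by_group(y_true, y_pred, groups)
print(overall)    # 0.94
print(per_group)  # {'A': 1.0, 'B': 0.4}
```

The aggregate of 94% looks acceptable, yet the model is wrong for most of group "B". An audit that stops at the aggregate would miss this.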

Advanced Techniques for Identifying Algorithmic Bias

Leveraging specialized tools and methodologies can uncover hidden biases that might otherwise go unnoticed. This includes employing fairness metrics that go beyond simple accuracy, and using techniques like counterfactual explanations to understand how model outputs change with slight input variations. The goal is to provide a comprehensive view of how an AI system behaves across different scenarios and user groups.

- Fairness Metrics: Implement a diverse set of fairness metrics (e.g., demographic parity, equalized odds) to assess disparate impact on different groups.

- Explainable AI (XAI) Tools: Utilize XAI techniques to understand model decisions and identify potential discriminatory pathways.

- Adversarial Testing: Employ adversarial attacks to intentionally probe models for weaknesses and biases under stress conditions.
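The two fairness metrics named in the list above can be sketched in a few lines of plain Python. This is an illustrative implementation under simple assumptions (binary labels, binary predictions, one protected attribute), with hypothetical function names and toy data. Summarizing equalized odds as the larger of the true-positive-rate gap and the false-positive-rate gap is one common convention, not the only one.

```python
def rates_by_group(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate."""
    stats = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, grp in zip(y_true, y_pred, groups) if grp == g]
        pos = [p for t, p in rows if t == 1]
        neg = [p for t, p in rows if t == 0]
        stats[g] = {
            "selection_rate": sum(p for _, p in rows) / len(rows),
            # NaN marks a group with no positives (or negatives); real audits
            # should handle that case before comparing groups.
            "tpr": sum(pos) / len(pos) if pos else float("nan"),
            "fpr": sum(neg) / len(neg) if neg else float("nan"),
        }
    return stats


def gap(stats, key):
    """Largest difference in one rate between any two groups."""
    values = [s[key] for s in stats.values()]
    return max(values) - min(values)


def demographic_parity_difference(stats):
    return gap(stats, "selection_rate")


def equalized_odds_difference(stats):
    return max(gap(stats, "tpr"), gap(stats, "fpr"))


# Hypothetical toy data: four applicants in each of two groups.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

stats = rates_by_group(y_true, y_pred, groups)
print(demographic_parity_difference(stats))  # 0.5
print(equalized_odds_difference(stats))      # 0.5
```

A value of 0 on either metric means the groups are treated identically by that measure. What counts as an acceptable gap is a policy decision, not something the code can determine.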

Once identified, biases require targeted mitigation strategies. This could involve re-balancing datasets, adjusting model architectures, or implementing post-processing techniques to correct for unfair outcomes. Continuous monitoring and re-auditing are vital to ensure that mitigation efforts remain effective as models evolve and new data is introduced, cementing the integrity of ethical AI systems.
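As a sketch of the dataset re-balancing option mentioned above, the example below uses a technique often called reweighing: each training record gets a weight of P(group) × P(label) / P(group, label), so that group membership and outcome become statistically independent in the weighted data. This is one possible approach chosen for illustration, and the function names and toy data are assumptions.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each record by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Share of weighted records in `group` that carry a positive label."""
    rows = [(y, w) for g, y, w in zip(groups, labels, weights) if g == group]
    return sum(w for y, w in rows if y == 1) / sum(w for _, w in rows)


# Hypothetical toy data: group "A" has a 4/6 positive rate, group "B" 1/4.
groups = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "A")
rate_b = weighted_positive_rate(groups, labels, weights, "B")
```

After weighting, both groups have a positive rate of about 0.5 in the training data. Whether that translates into fairer predictions still has to be verified on held-out data, which is why re-auditing after mitigation matters.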

Ensuring Algorithmic Transparency and Explainability

Algorithmic transparency and explainability are foundational pillars of ethical AI. Regulators and consumers alike are demanding a clearer understanding of how AI systems arrive at their decisions, especially in high-stakes applications like lending, hiring, or healthcare. Achieving this transparency is a complex technical and communication challenge, but one that is indispensable for reducing compliance risk in 2026.

Transparency doesn’t necessarily mean revealing proprietary code; rather, it involves providing meaningful insights into an AI model’s logic, data dependencies, and decision-making processes. This often requires translating complex mathematical operations into understandable terms for non-technical stakeholders. Insider strategies focus on developing layered explanations that cater to different audiences, from data scientists to legal teams and end-users.

Implementing Explainable AI (XAI) Solutions

The field of Explainable AI (XAI) offers a suite of tools and methodologies designed to make AI models more interpretable. These solutions can range from model-agnostic techniques that explain any black-box model to model-specific approaches that provide deep insights into particular AI architectures. The key is to select and implement XAI tools that are appropriate for the complexity and criticality of the AI system being audited.

For example, in a credit scoring AI, XAI tools could explain why a particular loan application was denied, highlighting the specific factors that contributed to the decision. This not only aids compliance with anti-discrimination laws but also builds trust with applicants. Organizations must invest in both the technology and the expertise to effectively implement and communicate these explanations.
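The credit scoring example can be sketched for the simplest case, a linear (logistic) scoring model, where each feature's contribution to the score is exact. All coefficients, baseline values, and feature names below are hypothetical. For non-linear models, a model-agnostic attribution method would be needed instead of this direct calculation.

```python
import math

# Hypothetical coefficients of a logistic credit model (not from a real system).
WEIGHTS = {
    "debt_to_income": -3.0,
    "years_of_credit_history": 0.2,
    "recent_late_payments": -0.8,
}
# Hypothetical reference applicant against which contributions are measured.
BASELINE = {
    "debt_to_income": 0.30,
    "years_of_credit_history": 8.0,
    "recent_late_payments": 0.0,
}
INTERCEPT = 1.0


def contributions(applicant):
    """Per-feature shift in the log-odds relative to the baseline applicant."""
    return {f: WEIGHTS[f] * (applicant[f] - BASELINE[f]) for f in WEIGHTS}


def approval_probability(applicant):
    z = INTERCEPT + sum(contributions(applicant).values())
    return 1 / (1 + math.exp(-z))


def reason_codes(applicant, top_n=2):
    """Features that pushed the score down the most, worst first."""
    negatives = sorted((c, f) for f, c in contributions(applicant).items() if c < 0)
    return [f for _, f in negatives[:top_n]]


applicant = {
    "debt_to_income": 0.55,
    "years_of_credit_history": 2.0,
    "recent_late_payments": 3.0,
}
print(reason_codes(applicant))
# ['recent_late_payments', 'years_of_credit_history']
```

The ranked list gives a denied applicant the main factors behind the decision in plain terms, and gives an auditor a starting point for checking whether any factor acts as a proxy for a protected attribute.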

The journey towards full algorithmic transparency is ongoing. It requires a commitment to continuous improvement, integrating feedback from audits and user experiences to refine explanation capabilities. By making AI systems understandable, organizations not only meet regulatory demands but also unlock new opportunities for debugging, improving, and innovating their AI solutions responsibly.

Developing Robust Accountability Frameworks

In the event of an AI-related error or harm, knowing who is accountable is paramount. Developing robust accountability frameworks is a critical, yet often overlooked, aspect of ethical AI audits. These frameworks establish clear lines of responsibility, ensuring that there are mechanisms for redress and learning from mistakes. Without them, the promise of ethical AI remains unfulfilled, increasing compliance risk.

An effective accountability framework goes beyond simply identifying the ‘responsible’ party. It encompasses processes for incident reporting, impact assessment, and corrective actions. It also considers the various layers of responsibility, from the data scientists who build the models to the executives who approve their deployment. This multi-faceted approach ensures that accountability is distributed appropriately across the organization.

Establishing Clear Lines of Responsibility

Defining roles and responsibilities early in the AI lifecycle is crucial. This includes designating individuals or teams responsible for data quality, model validation, ethical review, and post-deployment monitoring. These roles should be clearly documented and communicated throughout the organization, preventing ambiguity when issues arise.

- AI Ethics Committee: Form a dedicated committee to oversee ethical considerations and provide guidance on complex AI decisions.

- Incident Response Protocols: Develop clear procedures for reporting, investigating, and resolving AI-related incidents and harms.

- Audit Trails: Implement comprehensive logging and audit trails to track AI model development, deployment, and performance changes.
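One way to make the audit trail from the list above tamper-evident is to chain log entries by hash, so that altering any past entry invalidates everything after it. The sketch below uses only the Python standard library; the event names and entry fields are hypothetical, and a production system would also need durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(log: list, event: str, details: dict) -> dict:
    """Append an entry that commits to the hash of the previous entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "details": details,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry["hash"] = _digest(entry)  # hashed before the "hash" key is added
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash and check that each entry links to the one before."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


# Hypothetical lifecycle events for one model.
audit_log = []
append_event(audit_log, "model_trained", {"model": "credit-v2", "dataset": "2025-q4"})
append_event(audit_log, "model_deployed", {"model": "credit-v2", "approver": "risk-committee"})
print(verify_chain(audit_log))  # True
```

If someone later edits the `details` of the first entry, `verify_chain` returns `False`, which gives auditors a cheap integrity check before they rely on the log.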

Beyond internal mechanisms, accountability frameworks must also consider external stakeholders. This includes establishing clear communication channels for affected individuals to report concerns and seek remedies. By proactively building these frameworks, organizations demonstrate a commitment to responsible AI, fostering trust and significantly mitigating compliance risks associated with AI failures or ethical breaches.

Future-Proofing Your Ethical AI Audit Strategy for 2026 and Beyond

The landscape of AI ethics and compliance is dynamic, demanding a future-proof audit strategy. What works today might be insufficient tomorrow. Organizations must adopt a forward-thinking approach, anticipating emerging risks and integrating adaptive mechanisms into their ethical AI audit processes. This means embracing agility and continuous learning as core tenets of their strategy.

Future-proofing involves more than just staying updated on regulations; it requires an understanding of technological advancements, societal expectations, and evolving ethical norms. For instance, the rise of generative AI and foundation models introduces new complexities regarding intellectual property, synthetic data bias, and misuse potential. Audit strategies must evolve to address these cutting-edge challenges effectively.

Adopting a Continuous Auditing Paradigm

Moving away from one-off audits, organizations should embrace a continuous auditing paradigm. This involves integrating automated tools and regular assessments throughout the AI lifecycle, rather than just at deployment. Continuous monitoring allows for the early detection of issues, reducing the cost and complexity of remediation.
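A continuous audit can be as simple as a gate that runs on every retraining or on a schedule and compares current metrics with agreed limits. The sketch below is a minimal illustration; the check names, metric values, and limits are hypothetical and would in practice come from the organization's own risk tolerance policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    value: float            # metric measured in the current run
    limit: float            # agreed threshold from the governance policy
    higher_is_better: bool = False

    def passed(self) -> bool:
        if self.higher_is_better:
            return self.value >= self.limit
        return self.value <= self.limit


def run_audit_gate(checks):
    """Return the names of failed checks; an empty list means the gate passes."""
    return [c.name for c in checks if not c.passed()]


# Hypothetical metric values and limits for one scheduled run.
checks = [
    Check("demographic_parity_difference", value=0.12, limit=0.10),
    Check("minimum_group_accuracy", value=0.91, limit=0.85, higher_is_better=True),
    Check("score_drift_psi", value=0.04, limit=0.25),
]

failures = run_audit_gate(checks)
print(failures)  # ['demographic_parity_difference']
```

Wired into a deployment pipeline, a non-empty result could block a release or open an incident, turning the audit from a periodic report into an automatic control.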

Furthermore, investing in talent development and cross-functional training is critical. Ethical AI auditing requires a blend of technical, legal, and ethical expertise. Building internal capabilities and fostering a culture of ethical awareness will ensure that your organization can adapt to future challenges and maintain its commitment to responsible AI innovation. The goal is to embed ethical considerations so deeply that they become an intrinsic part of every AI project, rather than an external imposition.

| Key Point | Brief Description |
|---|---|
| Evolving Regulations | Anticipate stricter AI laws and frameworks (NIST, state-specific) by 2026. |
| Bias Mitigation | Implement proactive strategies using fairness metrics and XAI to detect and correct bias. |
| Algorithmic Transparency | Provide clear explanations of AI decisions for compliance and trust-building. |
| Continuous Auditing | Shift to ongoing monitoring and adaptive strategies for future AI risks. |

Frequently Asked Questions About Ethical AI Audits

What is an ethical AI audit?

An ethical AI audit is a systematic evaluation of an AI system to ensure it aligns with ethical principles and regulatory requirements, such as fairness, transparency, accountability, and privacy. It identifies potential biases, risks, and non-compliance issues across the AI lifecycle, from data collection to deployment and monitoring.

Why are ethical AI audits important in 2026?

By 2026, regulatory scrutiny on AI is expected to intensify significantly. Ethical AI audits reduce compliance risk by proactively addressing issues like bias and lack of transparency. They help organizations avoid legal penalties and reputational damage while fostering public trust in their AI initiatives, which supports long-term viability.

How can organizations reduce AI compliance risk by 15% in 2026?

Reducing AI compliance risk by a projected 15% in 2026 involves implementing robust AI governance frameworks and proactive bias detection and mitigation strategies, enhancing algorithmic transparency through XAI, and establishing clear accountability frameworks. Continuous auditing and a culture of ethical AI are also key to achieving this reduction.

What does a strong AI governance framework include?

A strong AI governance framework includes defined roles and responsibilities, comprehensive policy development for data and algorithms, continuous monitoring protocols for deployed AI models, and mechanisms for active stakeholder engagement. It provides the foundational structure for ethical AI development and auditing practices.

What role does Explainable AI (XAI) play in ethical audits?

Explainable AI (XAI) plays a vital role in ethical audits by making complex AI model decisions understandable. It helps auditors identify potential biases, assess fairness, and ensure transparency by providing insights into why an AI system produced a particular output. This is crucial for both regulatory compliance and building user trust.

Conclusion

The journey towards responsible AI in 2026 is inherently linked to the effectiveness of ethical AI audits. By prioritizing robust governance, proactive bias detection, algorithmic transparency, and clear accountability, organizations can not only reduce their compliance risk by a projected 15% but also build a foundation of trust and innovation. The future of AI demands a commitment to ethics, ensuring that technology serves humanity responsibly and sustainably.